The typical mobile website experience is horrible. Everyone knows that, right?

Sure, it’s easy to find some terrible mobile website experiences, but are mobile websites systematically worse than their desktop counterparts?

Now that 80% of all adults who go online own a smartphone, this is an important question to answer.

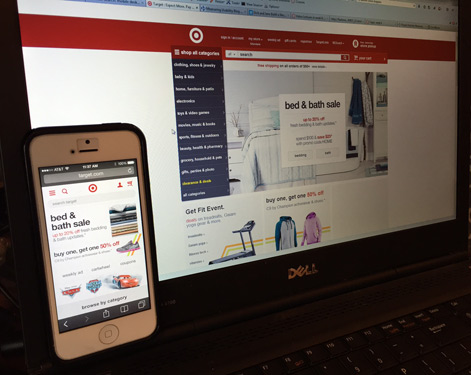

Mobile websites often look like stripped-down versions of their desktop alternatives, so it’s easy to see why there’s an assumption of more difficult experiences. In some cases, these limited sites are a result of a stop-gap effort to have a mobile solution. In other cases, a deliberately minimalist “mobile-first” effort produces these mobile designs. And of course the very limited real estate means it’s harder to read and see content, but it also means there’s no room for banner ads, hero images, promotional images, carousels, and other design elements typically included on sites with more real estate.

It seems reasonable to assume that, because of real-estate constraints, users would typically find mobile shopping more difficult than desktop shopping. So how much worse is the online mobile experience?

In a study we conducted recently, our client’s mobile website outscored their desktop website in a number of task and test measures. Was this a fluke, or is it possible that mobile sites can offer a better experience than their desktop counterparts?

It wouldn’t be the first time we’ve been surprised by more limited designs. We’ve found, on a couple of tree tests, that users are able to find items more easily using just the navigation structure than using the full website with in-page navigation and search. This suggests that the unconstrained design makes it harder, not easier, to find items.

Data Comparing the Mobile and Desktop Experiences

We expanded our dataset to comparable ones in the retail domain and the automobile-information domain. We collected the mobile-based data and the desktop-based data either simultaneously or with a gap of no more than a few weeks. We used different participants (between-subjects) for the mobile and desktop versions. All participants spent time interacting with their respective websites, and then all answered the same questions about the tasks and about the overall experience.

We examined mobile and desktop data from 7 websites. Data was collected from 3,740 participants, with the average number per website at 312; one exception was a mobile study with just 20 participants.

The same tasks were attempted on both mobile and desktop websites. The tasks involved either (a) participants locating a specific product or information or (b) participants given a set of parameters and then locating any product that matches the parameters. We collected data on task-completion rates, task ease, and task time. At the end of the experience, participants answered the SUPR-Q, which resulted in measures on overall quality, usability, trust, appearance, loyalty, and the Net Promoter Score.

Overall Perception Data

Of the 42 post-study SUPR-Q scores, 23 (55%) were actually higher for mobile than for desktop. This difference, though, is not statistically significant (p = .64).
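The article does not say which test produced this p-value. As a minimal sketch, assuming a two-sided exact binomial (sign) test against a 50/50 split, the 23-of-42 comparison can be checked as follows. The function name `sign_test_p` is hypothetical and introduced only for illustration.

```python
from math import comb


def sign_test_p(wins: int, n: int) -> float:
    """Two-sided exact binomial p-value against a 50/50 null.

    Assumption: each of the n comparisons is treated as an independent
    coin flip under the null hypothesis of no mobile/desktop difference.
    """
    # Use the larger of the two counts so we always sum the upper tail.
    k = max(wins, n - wins)
    upper_tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    # The null distribution is symmetric at p = 0.5, so doubling the
    # upper tail gives the two-sided p-value (capped at 1).
    return min(1.0, 2 * upper_tail)


# 23 of the 42 SUPR-Q scores were higher on mobile.
p_value = sign_test_p(23, 42)
print(f"p = {p_value:.2f}")
```

Under these assumptions the result is about .64, in line with the reported value. A proportion this close to one half is well within what chance alone would produce.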

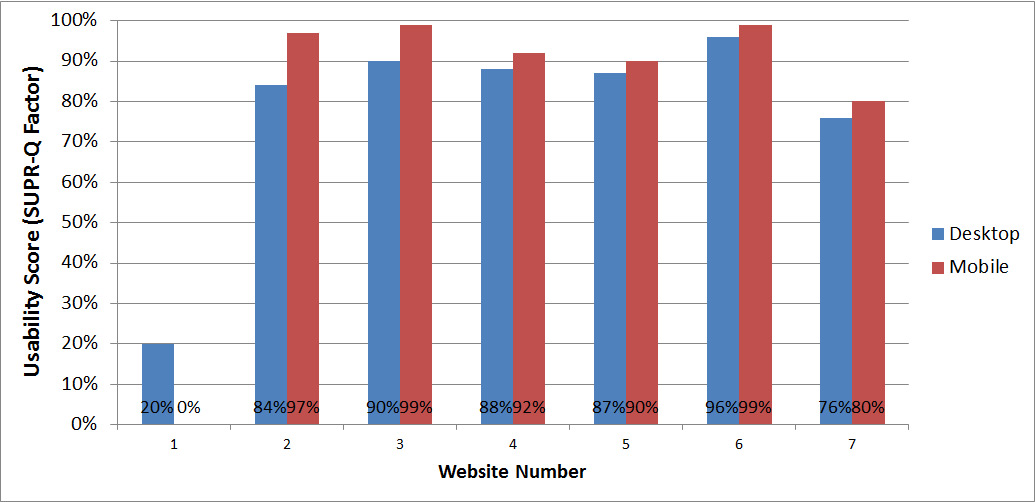

When we limit the post-study measures to just the usability factor of the SUPR-Q, 6 of the 7 websites had higher ratings on the mobile experience. The difference here is statistically significant (p = .08) at the significance level of alpha = .10. See Figure 1 below.

Figure 1: Comparison of post-study usability scores between 7 desktop and mobile websites. Six of the seven websites had higher usability scores on the mobile experience.

In other words, users for the most part perceived the mobile-website experience as more usable than the desktop-website experience. For the six websites that had higher mobile usability scores, the average difference was 7%. For the one website with the superior desktop experience (the study with the sample size of 20), the difference was 20%.

Task Performance Data

Perception of usability is different from performance usability. There is usually a solid correlation between what people think and what they do, but it’s not high enough for attitudes to replace actions, which is why we recommend recording both.

Across all 50 task metrics, 20 (40%) showed higher scores on mobile; again, this proportion isn’t statistically significant (p = .21).

Of the 14 tasks that had task-time data, 11 took longer on the mobile website. Not only was this difference statistically significant (p = .04), but the same tasks took, on average, 81% longer on mobile. So something that took 100 seconds on the desktop website took about 181 seconds on the mobile website. However, the bulk of these time differences came from tasks with more open-ended parameters (e.g., finding a product for a beach vacation). On the tasks with only one solution (e.g., find a blender under $40; data from one study only), three of the four tasks took 10% longer on the desktop than on mobile.
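The percentage comparison used here can be sketched in a few lines. The article does not say how the 81% average was computed, so averaging the per-task percentage differences is an assumption, and the function names and task times below are hypothetical, not study data.

```python
def percent_longer(desktop_seconds: float, mobile_seconds: float) -> float:
    """Percent by which the mobile time exceeds the desktop time.

    A negative result means the task was faster on mobile.
    """
    return (mobile_seconds - desktop_seconds) * 100 / desktop_seconds


def mean_percent_longer(task_times):
    """Average the per-task percent differences.

    Assumption: the article's average is a simple mean across tasks;
    the source does not confirm this.
    """
    diffs = [percent_longer(desktop, mobile) for desktop, mobile in task_times]
    return sum(diffs) / len(diffs)


# The article's own example: 100 seconds on desktop, 181 on mobile.
print(percent_longer(100, 181))  # 81.0

# Hypothetical (desktop, mobile) times in seconds, for illustration only.
hypothetical_times = [(100, 181), (60, 54), (45, 90)]
print(mean_percent_longer(hypothetical_times))
```

A mean of per-task percentages weights every task equally, so one very slow open-ended task can pull the average up sharply. That is consistent with the observation that most of the difference came from the open-ended tasks.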

Technical Note: The task measures aren’t independent, which violates one of the assumptions of the statistical tests. The task-based p-values are therefore likely inaccurate (lower than they should be), but they give some idea of the pattern we might see if independence were accounted for. The comparison of the usability scores across studies, though, is independent: there is one measure for each study.

Conclusion

Thoroughly answering the question of whether mobile websites are, in fact, less usable than their desktop counterparts requires a larger dataset from more types of sites, tasks, and industries. However, even with this limited dataset, some interesting patterns emerge:

- There is some evidence that task performance can actually be better on mobile than on desktop websites, at least for tasks with a single solution.

- Tasks generally took longer to complete on the mobile websites, although not always. All the pinching and scrolling takes its toll: many tasks took around twice as long on the mobile website.

- Overall perception of website usability was actually higher on 6 of the 7 mobile websites. Despite the nominally worse task performance (time especially), it may be the case that users have lower expectations when using their mobile phones and thus rate the experience higher.

In addition to examining a larger set of mobile websites, future studies might also look at additional controls related to the types of participants. That is, despite the large increases in mobile website usage, maybe mobile participants differ qualitatively from desktop participants. We know, for example, that participants tend to skew younger on mobile; this may affect perception and performance. So it’s likely that some of the difference in the results comes from measuring different (likely younger) people.

A future study can control for this variability by either equating the samples or using a within-subjects design (same participants on mobile and desktop), and by examining more websites. For now, we may need to reconsider the conventional wisdom about mobile experiences being universally worse.

We have more studies planned; we’ll keep you posted!