Cases spike, home prices surge, and stock prices tank: we read headlines like these daily. But what is a spike and how much is a surge? When does something crater versus tank or just fall?

Headlines are meant to grab our attention. They often communicate the dramatic story the author wants to tell rather than what the data say.

It isn’t easy to write headlines.

The Columbia Journalism Review regularly collects ambiguous headlines, such as these from 2018:

- Netflix Misses Subscriber Mark

- City Manager Tapes Head to District Attorney

- Celebrity Surprises 6-Year-Old with Epilepsy

- Students Cook and Serve Grandparents

These headlines are ambiguous because of their telegraphic style, which keeps headlines short by dropping function words that would otherwise clarify their meaning.

Another way in which headlines can be ambiguous is in their use of verbs that describe changes in magnitude without including the actual amount of change. For example, these headlines:

- Why the Stock Market Tanked Today

- Coronavirus Case Numbers Spiking Again

- Giants Playoff Chances Jumped Wild Percentage in Three Weeks

- US Stocks Fall as Market Decline Extends for Third Week

As we read these headlines, verbs such as tank, spike, jump, and fall indicate a change in the magnitude of something, implying a direction for that change (increasing or decreasing) and different intensities (“tank” suggests a greater change than “fall”). It isn’t clear, however, how these types of verbs are interpreted by readers. Complicating things further, those who write headlines tend to spice them up by using intense verbs to grab attention. At best this practice leads to more awareness of the news, but at worst it can instill panic and fear.

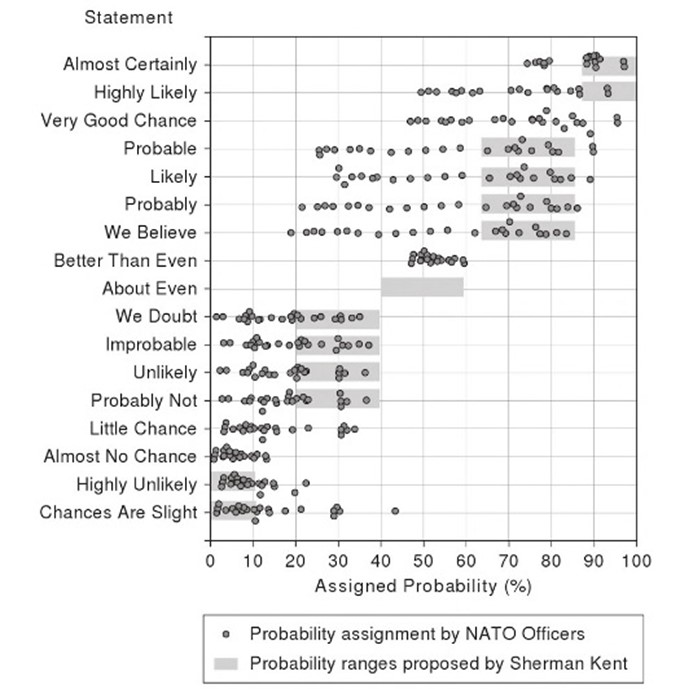

This concept is related to the ambiguity of expressions of uncertainty. Figure 1 shows a popular infographic that graphs judgments of estimated probability for various probabilistic expressions. The figure juxtaposes the judgment of an expert CIA analyst (Sherman Kent) who made recommendations in the 1950s about the ranges that analysts should consider when using the terms, with dot plots of the judgments of 23 NATO officers. The data show the rough rank order of the terms from low to high estimated probability and the variability of the officers’ judgments.

Inspired by this infographic, we decided to conduct an experiment to create a similar chart for verbs that express changes in magnitude through different directions (increasing vs. decreasing) and intensities.

Figure 1: Assignments of probabilities to statements (from Scott Barclay et al., Handbook for Decision Analysis, and Sherman Kent, “Words of Estimated Probability,” in Donald P. Steury et al., Sherman Kent and the Board of National Estimates: Collected Essays, Washington DC, Center for Study of Intelligence, CIA, 1994).

Experimental Design

In October 2020, 498 US-based online panel participants rated 12 change verbs in four contexts with one of two rating formats.

Half of the verbs are typically associated with decreasing amounts (cratered, tanked, fell, dropped, slumped, and plummeted) and the other half with increasing amounts (spiked, grew, jumped, rose, surged, and soared).

The four contexts were the COVID-19 pandemic, sports, politics, and stocks. Each respondent was randomly assigned to either a form field in which to type a number (no limit on the amount) or to a slider that ranged from 0 to 100.

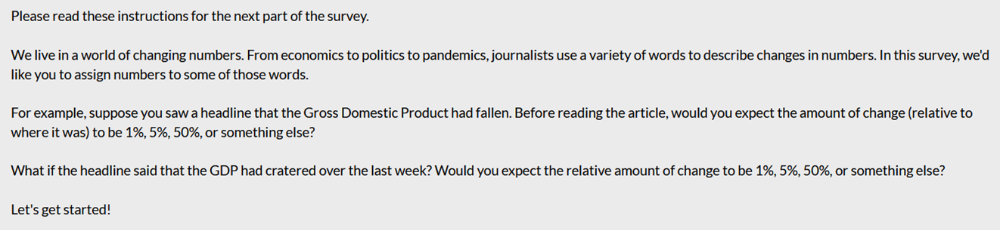

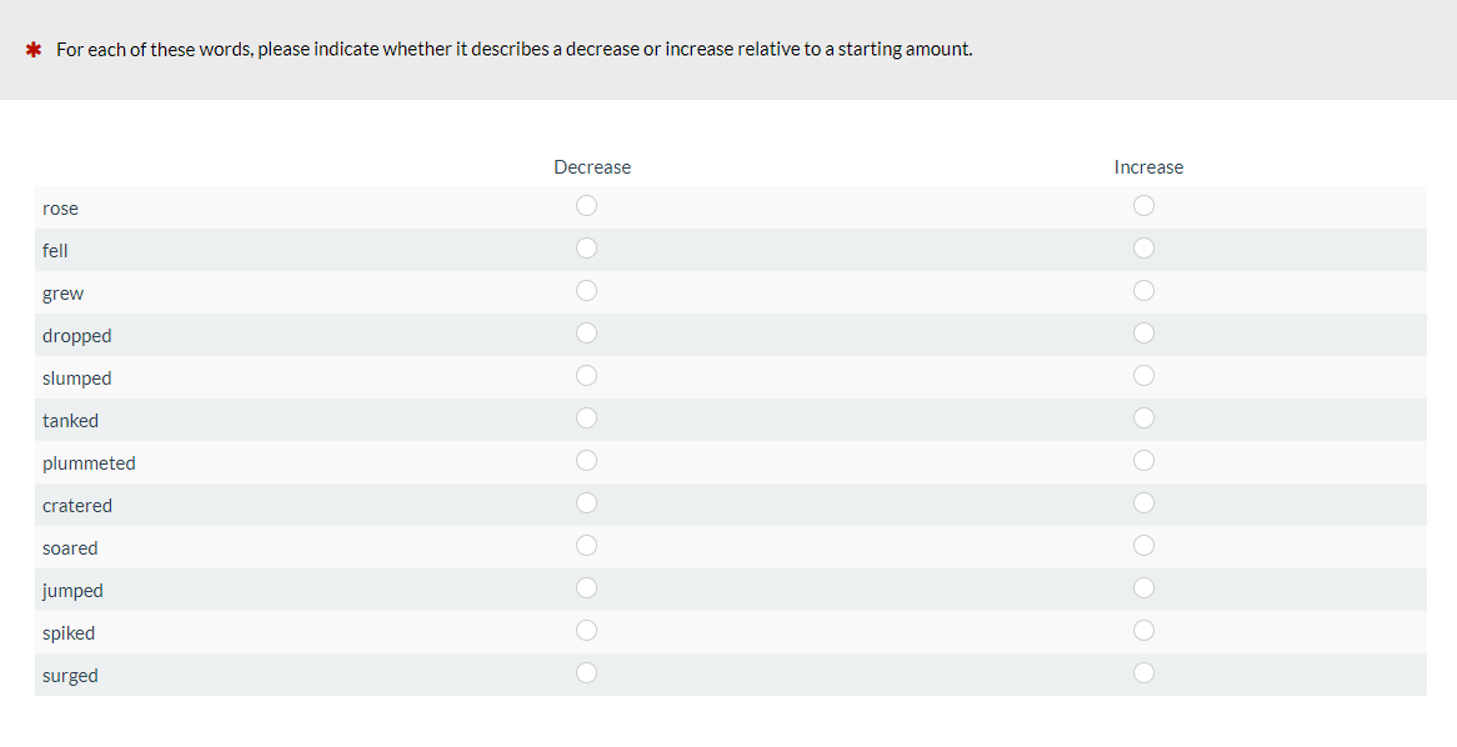

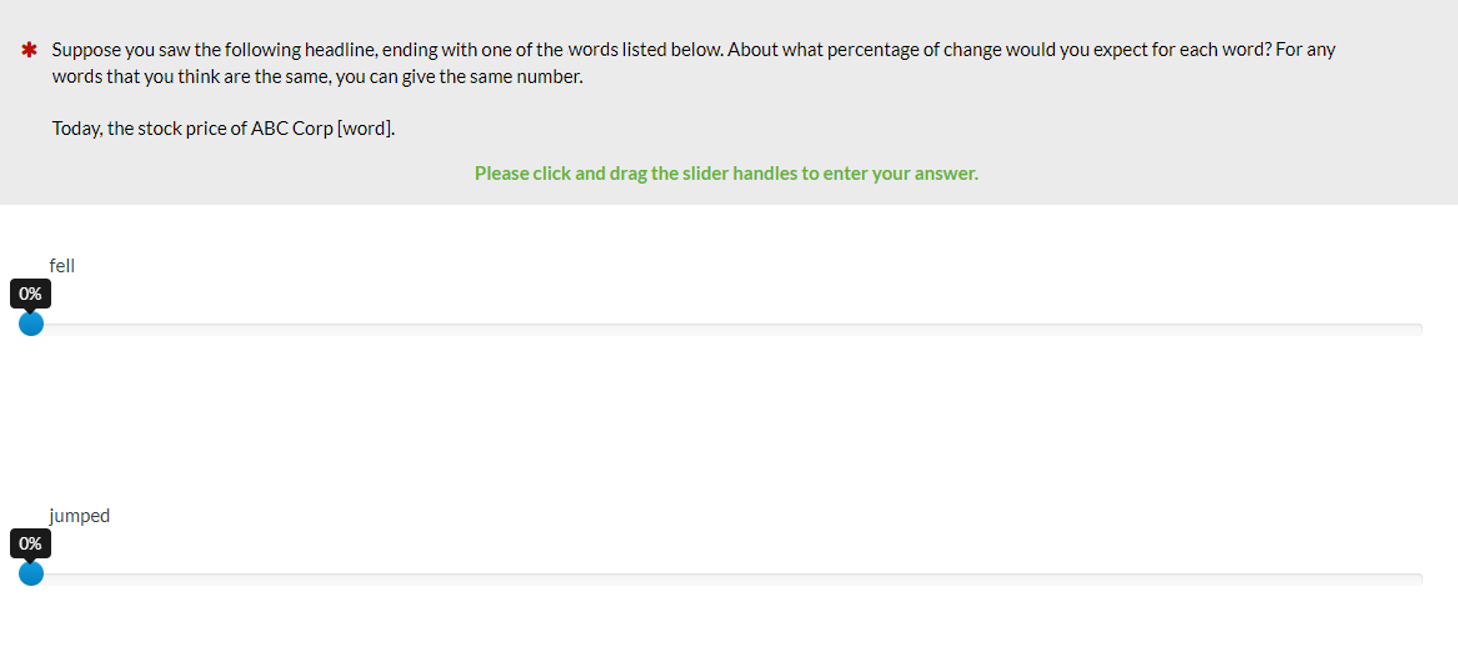

Across four contexts and 12 verbs, each respondent made 48 assignments of a specific percent amount of change, one for each verb in each context. The order of presentation of contexts and verbs was randomized. Respondents also indicated how they interpreted each verb as either indicating a decrease or an increase. Figure 2 shows examples of instructions and items from the MUIQ survey.

Figure 2a: Survey instructions.

Figure 2b: Interpretation of direction of change verbs.

Figure 2c: Judgment of intensity assigned to change verbs (other contexts were “Over the past week the percentage of the US population that is currently infected with COVID-19 …”; “Over the past 50 games the winning percentage of the Washington Nationals …”; “Yesterday the favorability percentage of Senator Foghorn …”).

Results

Data Cleaning

We weren’t sure how well people would understand the task despite our directions and multiple input options, so we paid particular attention to unusual response patterns, resulting in the correction of 6 cases and removal of 44 cases:

- Six respondents entered negative numbers for verbs associated with a decreasing amount. We changed negative to positive numbers and retained those cases.

- Forty-one respondents matched the expected direction implied by the verbs less than 75% of the time (i.e., matched fewer than 9/12). We deleted those cases.

- Three respondents provided estimates greater than 100 in the text field. Because the slider condition was limited to 100 and this happened to less than 1% of the sample, we deleted these three cases. In this situation, it wasn’t that these were wrong; in fact, it’s surprising that more people didn’t provide estimates higher than 100. After all, if something triples in value, it’s a 200% increase, but percent increases are likely harder to both interpret and communicate.
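The three cleaning rules above can be sketched in a few lines of Python. This is only an illustration: the `Respondent` record layout, the function names, and the order in which the rules are applied are assumptions, since the article does not describe how the data were stored or processed.

```python
from dataclasses import dataclass

DECREASING = {"cratered", "tanked", "fell", "dropped", "slumped", "plummeted"}
INCREASING = {"spiked", "grew", "jumped", "rose", "surged", "soared"}


@dataclass
class Respondent:
    # Hypothetical record layout (not the study's actual data format)
    directions: dict  # verb -> "decrease" or "increase", as judged by the respondent
    estimates: list   # magnitude estimates, in percent


def direction_matches(resp):
    """Count the verbs whose judged direction matches the expected one."""
    count = 0
    for verb, judged in resp.directions.items():
        expected = "decrease" if verb in DECREASING else "increase"
        if judged == expected:
            count += 1
    return count


def clean(respondents, min_matches=9, max_estimate=100):
    """Apply the three rules: fix signs, drop poor matchers, drop >100 cases."""
    kept = []
    for resp in respondents:
        # Rule 2: fewer than 9 of 12 matching directions -> remove the case
        if direction_matches(resp) < min_matches:
            continue
        # Rule 1: negative entries become positive magnitudes
        magnitudes = [abs(x) for x in resp.estimates]
        # Rule 3: any estimate above 100 -> remove the case
        if any(x > max_estimate for x in magnitudes):
            continue
        kept.append(Respondent(resp.directions, magnitudes))
    return kept
```

One design choice to note: the sketch takes absolute values before checking the 100 limit, so an entry of -150 would also lead to removal. The article does not say how such a case was handled.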

We conducted our analyses on the remaining 454 cases.

Distributions of Estimates

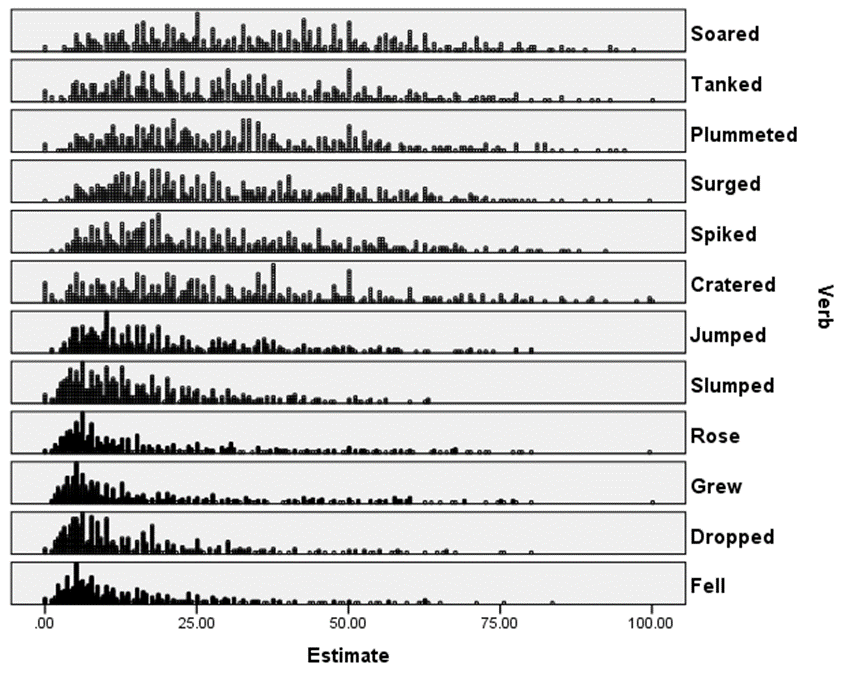

To get an overall picture of the results like those shown in Figure 1 for assigned probabilities, we graphed a dot plot of the results for each verb, averaging across contexts and item formats (Figure 3). All distributions had a right skew, and as the intensity of the verbs increased, the dispersion increased.

One of the first things we noticed was that the dispersion was noticeably higher than the dispersion of the probability judgments in Figure 1. This is likely a function of using a larger sample size, participants untrained in communicating probabilities or risk, and words that are potentially more ambiguous.

Figure 3: Assignments of estimated magnitudes to change verbs sorted from lowest to highest absolute values.

Means Higher than Medians

Table 1 shows the medians, means, standard deviations, and skewness for the data in each row of Figure 3. Consistent with the right skew of the distributions, the mean was higher than the median for every verb. The greater dispersion for more intense verbs also showed up in the standard deviations, which increased from 14.4 for “fell” to 21.4 for “soared,” while the skewness (deviation from symmetry) decreased from 1.84 for “fell” to 0.54 for “soared.”

| Verb | Median | Mean | Std Dev | Skew |
|---|---|---|---|---|
| Soared | 32.5 | 36.0 | 21.4 | 0.54 |
| Tanked | 29.0 | 32.3 | 20.8 | 0.68 |
| Plummeted | 27.5 | 32.6 | 21.4 | 0.93 |
| Surged | 27.5 | 31.9 | 20.2 | 0.73 |
| Spiked | 26.8 | 31.1 | 20.9 | 1.03 |
| Cratered | 26.3 | 31.5 | 22.1 | 0.87 |
| Jumped | 16.3 | 22.2 | 16.9 | 1.20 |
| Slumped | 12.5 | 16.9 | 13.2 | 1.25 |
| Rose | 10.8 | 18.6 | 18.5 | 1.53 |
| Grew | 10.0 | 18.4 | 18.6 | 1.58 |
| Dropped | 10.0 | 15.5 | 14.8 | 1.81 |
| Fell | 10.0 | 15.1 | 14.4 | 1.84 |

Table 1: Overall medians, means, standard deviations, and skewness for the 12 verbs.
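To make the link between right skew and "mean above median" concrete, here is a minimal sketch that computes the four statistics reported in Table 1 for a small made-up sample. The data are invented for illustration, and the skewness formula (the moment-based g1) is an assumption, as the article does not state which skewness estimator was used.

```python
import statistics


def skewness(values):
    """Moment-based sample skewness (g1); positive means a longer right tail."""
    n = len(values)
    mean = statistics.fmean(values)
    m2 = sum((x - mean) ** 2 for x in values) / n  # second central moment
    m3 = sum((x - mean) ** 3 for x in values) / n  # third central moment
    return m3 / m2 ** 1.5


def summarize(values):
    """Return the four statistics shown in each row of Table 1."""
    return {
        "median": statistics.median(values),
        "mean": statistics.fmean(values),
        "sd": statistics.stdev(values),  # sample standard deviation
        "skew": skewness(values),
    }


# Made-up estimates (not study data): most values are low, a few are large
sample = [5, 5, 10, 10, 10, 15, 20, 25, 50, 80]
summary = summarize(sample)
```

For this sample the median is 12.5 and the mean is 23.0: the two large values pull the mean upward without moving the median, which is the same pattern seen for every verb in the table.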

Verb Groupings

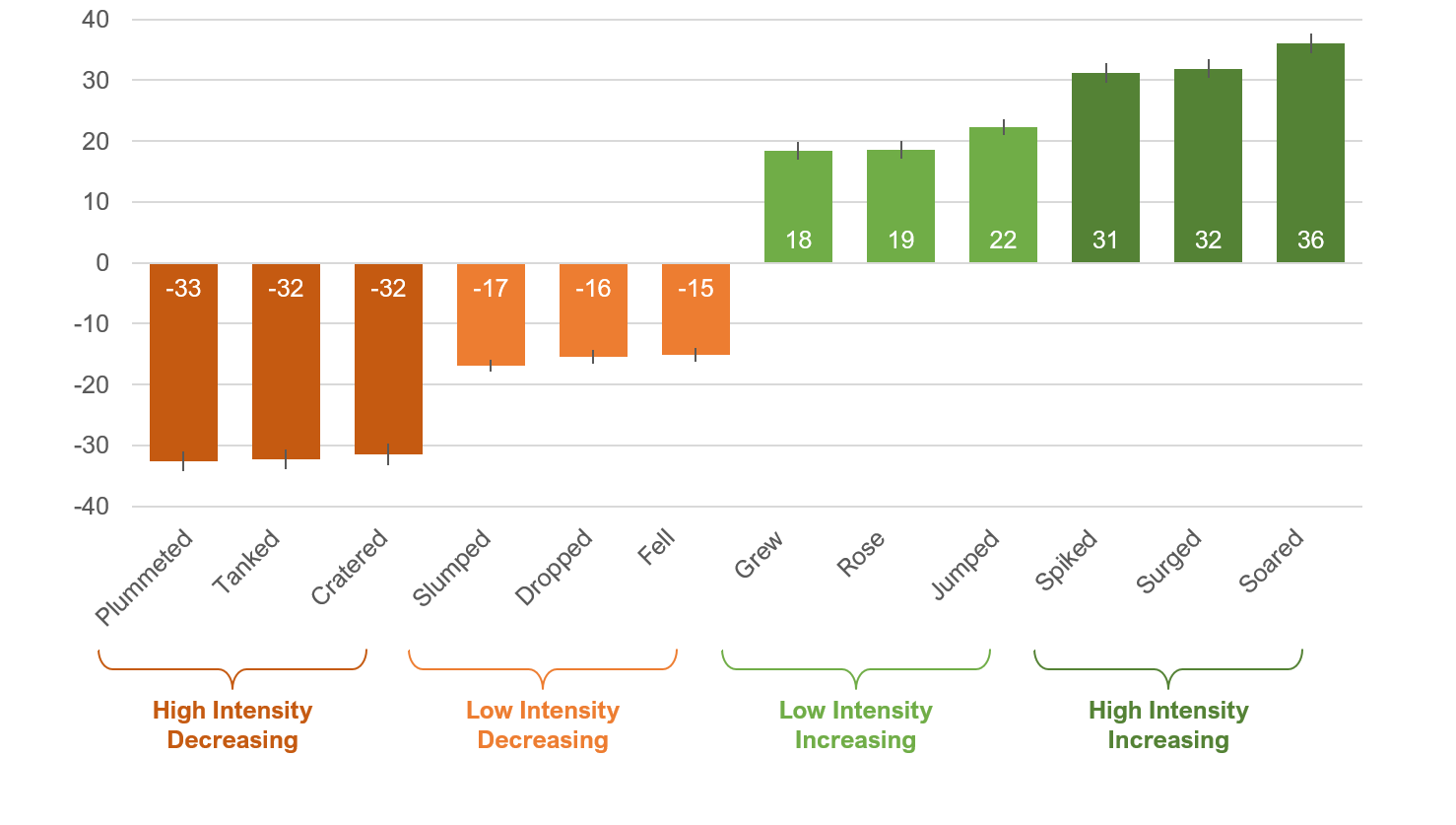

Figure 4 shows the 12 verbs arranged in ascending order after converting the means of verbs associated with decreasing amounts to negative numbers.

Figure 4: Overall means and 90% confidence intervals for the 12 verbs.
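As a rough sketch of how intervals like those in Figure 4 could be computed, the snippet below builds a 90% confidence interval around a mean and then flips the sign for a decreasing verb. It is an approximation under stated assumptions: it uses a normal (z) critical value rather than whatever method the authors used, and it assumes n = 454 (one averaged value per respondent), which the article does not confirm.

```python
from math import sqrt
from statistics import NormalDist


def mean_ci(mean, sd, n, confidence=0.90):
    """Normal-approximation confidence interval for a mean."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.645 for 90%
    margin = z * sd / sqrt(n)
    return mean - margin, mean + margin


# Mean and SD for "soared" from Table 1; n = 454 is an assumption
soared_low, soared_high = mean_ci(36.0, 21.4, 454)

# "Fell" is a decreasing verb: negate the bounds and swap them
fell_low, fell_high = mean_ci(15.1, 14.4, 454)
fell_signed = (-fell_high, -fell_low)
```

Under these assumptions the margin for "soared" is under two points either side of the mean, far narrower than the spread of individual estimates, because the interval describes uncertainty in the mean rather than variability among respondents.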

The verbs fall into four rough groups based on direction (decreasing vs. increasing) and intensity (less vs. more intense):

- High intensity decreasing: Plummeted, Tanked, Cratered

- Low intensity decreasing: Slumped, Dropped, Fell

- Low intensity increasing: Grew, Rose, Jumped

- High intensity increasing: Spiked, Surged, Soared
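The grouping above can be reproduced from the Table 1 means with a simple rule, sketched below. The cut-off of 25 is a hypothetical threshold chosen for illustration because it sits in the gap between the two intensity levels (22.2 for "jumped" vs. 31.1 for "spiked"); the article does not define a formal threshold.

```python
# Means from Table 1
MEANS = {
    "Soared": 36.0, "Tanked": 32.3, "Plummeted": 32.6, "Surged": 31.9,
    "Spiked": 31.1, "Cratered": 31.5, "Jumped": 22.2, "Slumped": 16.9,
    "Rose": 18.6, "Grew": 18.4, "Dropped": 15.5, "Fell": 15.1,
}
# Direction labels from the experimental design
DECREASING = {"Cratered", "Tanked", "Fell", "Dropped", "Slumped", "Plummeted"}
THRESHOLD = 25.0  # hypothetical cut-off between low and high intensity


def group(verb):
    """Label a verb by intensity (via its mean) and direction."""
    direction = "decreasing" if verb in DECREASING else "increasing"
    intensity = "High" if MEANS[verb] >= THRESHOLD else "Low"
    return f"{intensity} intensity {direction}"


groups = {}
for verb in MEANS:
    groups.setdefault(group(verb), []).append(verb)
```

Any threshold between 22.2 and 31.1 yields the same four groups, which is one way to see how clearly the verbs separate by intensity.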

Context and Input Format Had an Impact (Mostly)

Analysis of the data using ANOVA found significant main effects for Verb (see Figure 4), Context (sports numbers were slightly higher than other contexts), and Format (numeric entry values were markedly lower than slider values). There were also significant Verb by Format and Verb by Context interactions, but the Context by Format and Verb by Context by Format interactions were nonsignificant. We’ve focused on the main effect of Verb in this article, and are planning to dig into the other significant main effects and interactions in the future.
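For readers less familiar with ANOVA, the sketch below shows the core idea behind a main effect: comparing variation between group means with variation within groups. It is a deliberately simplified one-way, between-groups F statistic on made-up numbers, not the multi-factor analysis described above (which also involves repeated measures and interactions).

```python
from statistics import fmean


def one_way_f(groups):
    """F statistic for a one-way, between-groups ANOVA."""
    all_values = [x for g in groups for x in g]
    grand_mean = fmean(all_values)
    # Variation of the group means around the grand mean
    ss_between = sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual values around their own group mean
    ss_within = sum((x - fmean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)


# Made-up estimates for three verbs (illustration only, not study data)
fell = [5, 10, 10, 15]
jumped = [15, 20, 20, 25]
soared = [30, 35, 35, 40]
f_stat = one_way_f([fell, jumped, soared])
```

A large F value means the group means differ by more than the spread within groups would suggest. Turning F into a p-value requires the F distribution, which is left out here to keep the example in the standard library.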

Summary and Discussion

Unlike mathematics, much of natural language is ambiguous, including expressions of uncertainty and change. We see this ambiguity daily in headlines that report a change of some type without including the actual amount of change (e.g., “Coronavirus Case Numbers Spiking Again”).

To help understand how people interpret the magnitude of such claims, we analyzed estimates from 454 US-based respondents on 12 verbs in four contexts, using either sliders or open numeric fields. We found:

There were four clusters of change. Despite the wide range in estimates in verbs (which we somewhat expected), it was interesting that the verbs tended to fall into four groups defined by increasing/decreasing direction and lower/higher intensity: Plummeted, Tanked, Cratered; Slumped, Dropped, Fell; Grew, Rose, Jumped; Spiked, Surged, Soared.

Estimates varied a lot. Assignments of estimated magnitudes to change verbs were all over the place, especially at higher levels of intensity, making it difficult to say precisely how intensity relates to specific change percentages. This suggests that a substantial portion of readers may differ in how they interpret the change by a factor of two or three. For example, 10% to 20% of readers may interpret “soared” to imply an increase of less than 25%, while a similar share may interpret it as a change of more than 50%.

Estimates with 100%+ changes were rare, even with open text fields. Only three respondents provided estimated values above 100%, that is, more than a doubling (e.g., 10 to 20 is a 100% increase). The dearth of 100%+ estimates could be because of the contexts participants encountered or the difficulty in communicating and understanding changes in percent versus percentage point changes (i.e., relative vs. absolute magnitudes of change).
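The difference between a percent change and a percentage point change is easy to blur, so here is a small worked sketch. The function names and the 2%-to-3% example are illustrative assumptions, not taken from the survey.

```python
def percent_change(old, new):
    """Relative change, expressed as a percent of the starting value."""
    return (new - old) / old * 100


def percentage_point_change(old_pct, new_pct):
    """Absolute difference between two percentages."""
    return new_pct - old_pct


doubling = percent_change(10, 20)  # 10 to 20 is a 100% increase
tripling = percent_change(10, 30)  # tripling is a 200% increase

# A rate that moves from 2% to 3% rises by 1 percentage point,
# but that is a 50% relative increase
points = percentage_point_change(2, 3)
relative = percent_change(2, 3)
```

The same change can therefore be reported as "1 point" or "50%", which may be part of why respondents rarely gave estimates above 100.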

There was surprisingly little difference between contexts. Despite using different contexts to generate estimates (pandemic, sports, politics, and stocks), only sports elicited statistically different estimates, suggesting change estimates in politics, pandemics, and stocks may be conceptualized similarly.

Estimates had a strong right skew and long tail. Estimates tended to cluster in the lower range with a few values scattered into a long tail, with that skewness more pronounced for lower-intensity verbs due to a larger number of assignments at the lower end of the scale.

Numeric entry values were markedly lower than slider values. We had anticipated that giving respondents a fixed range with a slider would constrain estimates. Surprisingly, however, we found it was open-ended numeric input fields where respondents had no constraints that tended to elicit lower change estimates (whether or not the analysis included the three cases that contained 100%+ changes). We’ll investigate this more in a future article.

Match the intensity of the verb with the data. The main takeaway from this research, whether you research user experience or write news headlines, is to match the intensity of the verb you choose to the data you’re reporting, at least concerning lower or higher intensity. Small changes in these contexts should not be described using verbs from the two extreme categories (e.g., soaring, spiking, tanking, or plummeting). Doing so dilutes their meaning, likely adds confusion, and, when relatively large changes occur in the same context, leaves fewer ways to clearly and honestly communicate the change without adding prefixes such as super and uber (e.g., super-surge).