Whether you’re conducting an early stage test of a prototype or late validation, these five tips can make any usability test more credible. The tips both temper skepticism about small samples and help you avoid overstating your findings.

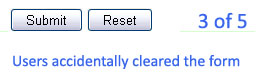

- Count the number of users that experience each problem. Early testing is all about finding and fixing usability problems. But make those problem lists even more helpful by providing the number of users that encountered the problem. For example 3 out of 5 or 5 out of 7. These numbers will be crucial for estimating impact, prioritizing and for use in future comparisons.

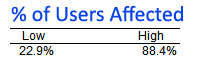

- Estimate problem impact using confidence intervals: Knowing how many users encountered a problem allows you to estimate the potential impact on all users. Confidence intervals work on any sized sample to provide your best estimate. For example, for a design issue that 3 of 5 users encountered would tell you between 23% and 88% would also have the same problem. While the confidence interval is wide, it’s highly improbably fewer than a quarter of the users wouldn’t encounter this problem. Problem frequency can be used in conjunction with severity for prioritizing problems as there’s never enough time or money to fix everything. Use the Problem Frequency Calculator to compute the interval.

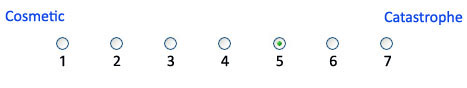

- State a problem’s severity separate from frequency: Not all problems are equal. Assume frequency and severity are independent. Some design problems can lead to crashes, data loss or nuclear meltdowns (OK, the last one is not something you or anyone conducting a test with small sample sizes will ever deal with but you get the point). Rate your severity using a scale with at least 3 categories. You need to distinguish between the trivial many problems and the critical few. I’m a believer that having somewhere between 5 and 11 points on a severity scale will give you enough points of discrimination without going overboard.

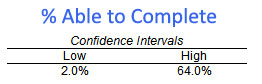

- Use completion rates: Can users complete the task Yes or No? Task completion rate is the simplest yet most fundamental usability metric. It really takes little effort to collect. In early testing when finding and fixing problems is the focus it’s easy to neglect completion rates. Completion rates can be used to justify why issues are legitimate and not false alarms. There are often disagreements about whether a design element is a feature or failure. Showing high task failure rates at least moves the discussion from whether there is a problem to what is causing the problem. Completion rates are the outcome (y) and design problems are the causes (x). You also need to use confidence intervals with completion rates. For example, if 1 out of 5 users were able to complete a task then you can be virtually certain fewer than 70% of all users will be able to. Use the Completion Rate Calculator to compute the interval.

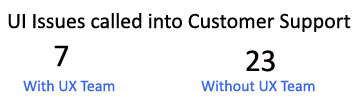

- Keep track of your past data and use it for comparisons: Few things provide more meaning to your data that showing how well a test is compared to past tests. Is the number of usability problems high or low relative to tests conducted on similar products or at similar design stages? What is the typical number of severe problems detected in early design versus late design? Keeping track of your data provides great benchmarks and can be an excellent source for ROI. How much are early efforts in design paying off? Can you compare that to other applications that didn’t have the benefit of early iterative testing?

Get started with the Quantitative Starter Package Today.