As you approach a research project, you are often faced with choices about different methods and concepts.

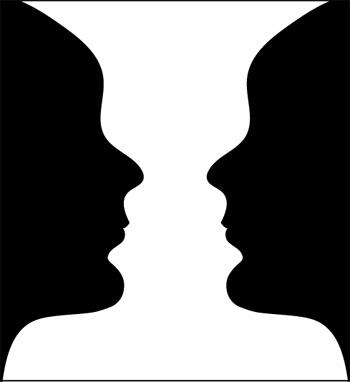

As you weigh your options among methods, keep in mind that seemingly opposing methods may actually offer complementary rather than contradictory views of the user experience.

The following pairs of essential concepts are often portrayed as mutually exclusive while, in fact, the elements of each pair complement each other.

Understanding the different but often complementary nature of these paired concepts can help improve your research.

1. Inspection Methods and Usability Testing

Inspection methods involve having an interface expert (who, ideally, is also an expert in the domain) evaluate an interface, often against a set of guidelines (called heuristics).

Usability testing involves observing users as they engage with an interface to accomplish tasks. Both methods generate lists of problems and insights. Only usability testing generates performance metrics, such as task-completion rates or task time, but the problem lists overlap: we've found that inspection methods uncover around 30% of the issues found in usability tests.
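An overlap figure like this is simple set arithmetic: the share of usability-test issues that an inspection also flagged. The minimal sketch below assumes both problem lists have already been matched to shared issue IDs (the hard part in practice, not shown here). The function name and the issue IDs are hypothetical placeholders, not real study data.

```python
def share_found_by_inspection(inspection_issues, test_issues):
    """Fraction of usability-test issues that the inspection also flagged."""
    test = set(test_issues)
    if not test:
        raise ValueError("the usability test found no issues; overlap is undefined")
    return len(test & set(inspection_issues)) / len(test)


# Hypothetical issue IDs, already matched across the two methods.
inspection = {"nav-label", "form-error", "search-filter"}
usability_test = {
    "nav-label", "form-error", "checkout-button", "date-picker", "search-scope",
    "login-link", "help-text", "cart-update", "promo-code", "shipping-cost",
}

share = share_found_by_inspection(inspection, usability_test)
inspection_only = inspection - usability_test
print(f"Inspection found {share:.0%} of the test's issues")  # 20% with these made-up lists
print("Found only by inspection:", sorted(inspection_only))
```

The second print is the point of the "AND, not OR" argument: an issue such as the hypothetical `search-filter` can surface in one method and not the other.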

There was a big debate in the 1990s over how effective inspection methods are, compared with usability testing, at uncovering usability issues. The seminal paper, Damaged Merchandise, provides a good overview of that debate. In practice, the choice shouldn't be inspection methods OR usability testing, but rather inspection methods AND usability testing.

2. Analytic and Empirical Methods

Analytic methods are those that don't involve observing participants or collecting data directly from them. These include heuristic evaluations, cognitive walkthroughs, and Keystroke-Level Modeling (KLM).

Analytic methods are particularly helpful when:

- it’s difficult to recruit participants

- you don’t want to subject participants to obvious usability problems that prevent them from uncovering other issues

- you want to estimate the error-free task time of skilled users
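To illustrate that last point, a KLM estimate is a sum of standard operator times over the steps a skilled user would perform. The sketch below uses the operator values commonly cited from Card, Moran, and Newell; treat them as assumptions to check against your own source, since the keystroke time in particular varies with typing skill. The task and operator sequence are hypothetical, and the sketch does not apply KLM's rules for where to place mental-preparation (M) operators; it simply takes a hand-written sequence.

```python
# Commonly cited KLM operator times in seconds (Card, Moran & Newell).
# K depends on typing skill; 0.20 s is the usual "average skilled typist" value.
OPERATOR_SECONDS = {
    "K": 0.20,  # press a key or keyboard button
    "P": 1.10,  # point the mouse at a target
    "B": 0.10,  # press or release a mouse button
    "H": 0.40,  # home hands between keyboard and mouse
    "M": 1.35,  # mentally prepare for the next step
}


def klm_estimate(sequence: str) -> float:
    """Estimate error-free expert task time for a string of operators, e.g. 'MPBB'."""
    unknown = set(sequence) - set(OPERATOR_SECONDS)
    if unknown:
        raise ValueError(f"unknown operators: {sorted(unknown)}")
    return sum(OPERATOR_SECONDS[op] for op in sequence)


# Hypothetical task, hand starting on the mouse: decide (M), point at the
# search box (P), click (B B), move to the keyboard (H), type "laptop" and
# press Enter (7 x K).
search_task = "MPBBH" + "K" * 7
print(f"Estimated time: {klm_estimate(search_task):.2f} s")  # 4.45 s
```

Because no participants are involved, this kind of estimate is cheap to compute for competing designs, but it only models skilled, error-free performance.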

Empirical methods, such as usability testing, surveying, and customer interviews, do involve observing and collecting data directly from participants. They’re essential if you need to make definitive statements about actual user performance.

Good research involves a mix of analytic and empirical methods.

3. Qualitative and Quantitative Analysis

Qualitative research involves collecting and analyzing primarily nonnumeric data from word-, picture-, and action-oriented activities. Quantitative analysis, of course, involves numbers or things that can be converted to numbers. These two analysis types aren’t opposites: every quantitative measure comes first from a qualitative observation, underscoring their complementary nature.

A common misconception holds that small sample sizes mean qualitative methods and large sample sizes mean quantitative methods, but this isn't necessarily true. We may need to quantify behaviors with small sample sizes, and we may need to note and code behaviors with large sample sizes.

To describe and improve the user experience, you need quantitative methods to describe frequency and qualitative methods to understand the why.
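The step from qualitative observation to quantitative measure can be as simple as coding session notes and counting how many participants show each code, while keeping the verbatim notes that explain why. This is a minimal sketch; the code names, participant IDs, and notes are hypothetical. It counts each code once per participant so that one participant's repeated notes don't inflate the frequency.

```python
from collections import defaultdict


def summarize_codes(notes):
    """Map each code to (number of participants affected, supporting quotes)."""
    affected = defaultdict(set)   # code -> participants who showed it
    quotes = defaultdict(list)    # code -> verbatim notes, kept for the "why"
    for participant, code, quote in notes:
        affected[code].add(participant)
        quotes[code].append(quote)
    return {code: (len(affected[code]), quotes[code]) for code in affected}


# Hypothetical coded observations: (participant, code, note).
notes = [
    ("P1", "missed-filter", "Scrolled past the filter panel"),
    ("P1", "missed-filter", "Opened filters only after a hint"),
    ("P2", "missed-filter", "Asked how to narrow the results"),
    ("P2", "unclear-label", "Worried 'Proceed' would charge the card"),
    ("P3", "missed-filter", "Used search instead of filters"),
    ("P4", "unclear-label", "Hovered over 'Proceed' before clicking"),
]

total = len({participant for participant, _, _ in notes})
summary = summarize_codes(notes)
for code, (count, code_quotes) in sorted(summary.items(), key=lambda item: -item[1][0]):
    print(f"{code}: {count}/{total} participants")
    for quote in code_quotes:
        print(f'    "{quote}"')
```

The counts answer "how often"; the quotes printed under each count answer "why."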

4. Summative and Formative Evaluation

Summative evaluation establishes benchmarks: How usable? How satisfied? How loyal? Formative evaluation is primarily about finding and fixing interaction problems. In practice, most studies mix summative and formative approaches. Even when we conduct large-scale annual benchmarks for companies, we still diagnose the usability problems and make recommendations on what to fix. When we're detecting problems with an interface, we include task- and test-level metrics that enable us to gauge whether usability is improving from one phase to the next.

So while it may be convenient to think of studies as either formative or summative, don’t let the lexicon prevent you from mixing these in one study.

5. Internal and External Validity

Research is often commissioned on a sample of customers to establish causation for the entire customer population. For example, you may want to know whether a new design yields higher conversion rates, or whether a new pricing model increases customer loyalty. To make such claims, you need to be confident that the results aren't being distorted by nuisance variables that could cloud the causal conclusion.

Questions that nuisance variables can raise about claims of causation include:

- Did users really prefer a new design, or was their choice skewed by the presentation order of the designs?

- Did the design increase revenue, or was revenue already increasing for other reasons?

- Do customers prefer more usable websites, or is their preference based on the visual appeal of the website?
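For the first question, a common safeguard is counterbalancing: varying the order in which designs are presented across participants. The sketch below builds a balanced Latin square (a Williams design), one standard counterbalancing scheme; the article doesn't prescribe it, and the design labels and participant IDs are placeholders.

```python
def balanced_latin_square(n):
    """Presentation orders of condition indices 0..n-1 (Williams design).

    For even n, the n rows put each condition once in every position, and
    each condition immediately follows every other condition exactly once.
    For odd n, the mirrored rows are appended (2n rows in total) so that
    each condition follows every other condition equally often.
    """
    # First row follows the pattern 0, 1, n-1, 2, n-2, ...
    first = []
    for j in range(n):
        if j == 0:
            first.append(0)
        elif j % 2 == 1:
            first.append((j + 1) // 2)
        else:
            first.append(n - j // 2)
    # Each later row shifts every condition index by one (mod n).
    rows = [[(c + r) % n for c in first] for r in range(n)]
    if n % 2 == 1:
        rows += [list(reversed(row)) for row in rows]
    return rows


def assign_orders(participants, designs):
    """Cycle participants through the balanced orders of the designs."""
    orders = balanced_latin_square(len(designs))
    return {p: [designs[i] for i in orders[k % len(orders)]]
            for k, p in enumerate(participants)}


schedule = assign_orders([f"P{i}" for i in range(1, 9)], ["A", "B", "C", "D"])
for participant, order in schedule.items():
    print(participant, ", ".join(order))
```

The balance is only exact when the number of participants is a multiple of the number of orders (4 here for four designs, 6 for three), so recruiting in those multiples keeps order effects from favoring any one design.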

When we account for and control nuisance variables in a study, we can increase our internal validity. When we can show that the study's conditions mimic the real-world situation of the entire customer population, with all its confounding variables, we can increase our external validity.

Good research balances internal and external validity so that our findings hold up in both the lab and the real world. It can be tricky to establish high external and internal validity in one study, so consider forming a hypothesis in a study with, say, higher external validity, and then trying to replicate the findings in a study with higher internal validity.

6. Small and Large Samples

While your sample size can't be at once small and large, the distinction between the two is fuzzy. For some, the demarcation line falls at 10 or 30; for others, at 100. There is no magic or universal number at which a sample size goes from being small to being large. The mathematics behind most statistical computations requires at least two participants in a study. All things considered, a larger sample size yields more precise estimates and a greater ability to distinguish real differences from random noise.

But quantitative methods aren't limited to large sample sizes (whatever number that is), and formative methods aren't limited to small sample sizes. For more on the statistical techniques best suited to large and small sample sizes, see our book, Quantifying the User Experience.
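Both points show up in a confidence interval around a task-completion rate. The sketch below uses the adjusted-Wald (Agresti-Coull) interval, a standard choice for completion rates that stays usable even at small sample sizes; it is one reasonable method, not necessarily the one the book uses for every case. The sample sizes and success counts are hypothetical.

```python
import math


def adjusted_wald_interval(successes, n, z=1.96):
    """Adjusted-Wald (Agresti-Coull) interval for a completion rate.

    Adds z^2/2 successes and z^2 trials before computing a Wald interval.
    z = 1.96 gives an approximately 95% interval; bounds are clipped to [0, 1].
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    n_adj = n + z ** 2
    p_adj = (successes + z ** 2 / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# The same 80% observed completion rate at three hypothetical sample sizes.
for successes, n in [(4, 5), (40, 50), (400, 500)]:
    low, high = adjusted_wald_interval(successes, n)
    print(f"{successes}/{n}: {low:.0%} to {high:.0%}")
```

With five participants the interval is wide but still informative (it can rule out very low completion rates); each tenfold increase in sample size narrows it substantially.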

While these six paired concepts may seem mutually exclusive, they often complement each other. Understand the distinctions within each pair, consider how tenuous those distinctions can be, and then look for opportunities to use both.