Surveys are ubiquitous.

We answer them and we develop them because they are an efficient means for collecting feedback and insight from current and prospective customers.

Here is a mix of ten tips, insights and philosophies to consider for your next survey.

- Have clear and testable hypotheses: Ask a realtor and they’ll tell you that the money you make on the sale of your house depends more on what you paid for it than on what you try to sell it for. In other words, by the time you care most about profits and losses, it’s probably too late to do much about them. The same principle applies to surveys. A survey’s results are only as good as the effort you put into crafting the right questions and response options, and that effort happens days, weeks or months before you analyze the responses. For an effective survey, you want clear hypotheses that you can test with the right questions and response options. For example, do users scan QR codes primarily for discounts or to learn more about the product? Do users avoid using the website on their smartphones because of security concerns or because the screen is too small? Well-chosen questions and response options can answer these. Contrast them with a more open-ended question: How do customers use their mobile phones when on our website?

- Don’t let questions reflect the business view: Surveys are usually commissioned when there is a clear and present need for a decision in a business or organization. While there should be good hypotheses and clear research questions that you want answered, the survey questions should not reflect the organizational plans. Users don’t think in terms of sales cycles, marketing funnels, value propositions, unique selling points, or content hierarchy. They think more in terms of the steps needed to get more stuff for less money with less effort. It’s our job to ask the questions in their language and to translate their answers into actions for the business.

- Don’t waste time on minutiae: Parkinson’s law of triviality states that the time spent on any item will be in inverse proportion to the sum involved. In other words, we spend way too much time on the stuff that doesn’t matter. I’d add the Sauro corollary: the amount of time spent on, and the number of changes made to, a survey element are inversely proportional to the element’s impact. Clear wording and the right answer choices are important, but it’s easy to get carried away arguing about things like age brackets, salary amounts, question order, page breaks, required questions, odd or even scale points, number of scale points, colors, asterisks, and bolding.

- Think shorter: Gerry McGovern tells us that the worst way to design a website is to have five smart people in a room drinking lattes. I’d say the same principle applies to survey writing. The more questions caffeinated product managers get to add to a survey, the longer and more complex the survey will be to build, respond to and analyze. This is largely the impetus behind the Net Promoter Score’s popularity. Instead of launching a bloated survey with complex questions annually, it’s better to ask a single question on whether customers would recommend a product, and then find a way to do something about the problems that are uncovered.

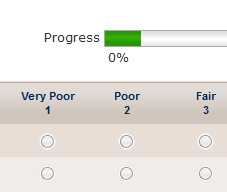

- Watch for non-mutual exclusivity: When creating response choices in which respondents are asked to pick only one choice, be sure each one really means one choice (mutually exclusive). For example, with age and income brackets, it’s easy to overlook overlapping categories like the example below:

  Please select your age:

  - 20-30
  - 30-40
  - 40-50
  - 50+

  An example of non-mutually exclusive age groups. A respondent who is 30 could pick either of the first two groups, and the same problem occurs at 40 and 50. The brackets should read 20-30, 31-40, 41-50 and 51+.

- Pretest, refine, soft-launch, then test again: Peter Skillman’s Marshmallow Challenge showed that teams of kindergartners were better than MBA graduates at constructing towers from spaghetti and marshmallows. The reason? The MBA students relied on a single plan, whereas the kindergartners found out what worked through trial and error. Instead of relying on a single, grand, planned survey, consider several iterations that each collect data on a few questions. I’ve found that, instead of waiting until the end to discover that a question was unhelpful, redundant or misleading, a few smaller surveys can identify problems before time runs out.

- Randomize: It’s one of the tenets of good scientific experimentation: randomize. In a recent survey we showed respondents two homepage designs, the old one and a new one, and asked which they preferred. Half the respondents saw the old design on the left, and the other half saw it on the right. It turned out that the design on the right was always preferred. For response options, there is a bias toward choices placed first and to the left. Wherever you can, randomize to reduce these biases.
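Overlaps like the age brackets in the mutual-exclusivity tip are easy to catch mechanically before a survey goes out. The sketch below is a minimal Python check, assuming each bracket is a pair of inclusive whole-year bounds and that an open-ended top bracket such as “51+” is written with an upper bound of `None`. The function name `check_brackets` is hypothetical, not a feature of any survey tool. It reports overlaps (an age that fits two choices) and, as a bonus, gaps (an age that fits none).

```python
from typing import Optional

# A bracket is (low, high) with inclusive whole-number bounds;
# high=None marks an open-ended top bracket such as "51+".
Bracket = tuple[int, Optional[int]]


def label(b: Bracket) -> str:
    lo, hi = b
    return f"{lo}+" if hi is None else f"{lo}-{hi}"


def check_brackets(brackets: list[Bracket]) -> list[str]:
    """List overlaps (a value fits two choices) and gaps (a value fits none)."""
    problems = []
    ordered = sorted(brackets, key=lambda b: b[0])
    for a, b in zip(ordered, ordered[1:]):
        hi1, lo2 = a[1], b[0]
        if hi1 is None:
            problems.append(f"open-ended bracket {label(a)} is not last")
        elif lo2 <= hi1:
            problems.append(f"overlap: {label(a)} and {label(b)}")
        elif lo2 > hi1 + 1:
            problems.append(f"gap: no choice for {hi1 + 1}-{lo2 - 1}")
    return problems


print(check_brackets([(20, 30), (30, 40), (40, 50), (50, None)]))
# ['overlap: 20-30 and 30-40', 'overlap: 30-40 and 40-50', 'overlap: 40-50 and 50+']
print(check_brackets([(20, 30), (31, 40), (41, 50), (51, None)]))
# []
```

If ages could be entered with decimals, inclusive integer bounds no longer work and the brackets would need half-open ranges instead.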

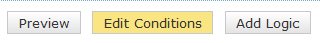
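Both kinds of randomization in the randomize tip fit in a few lines of Python. This is a minimal illustration, not how any particular survey platform implements it. The hypothetical `assign_positions` counterbalances placement so that half the respondents (rounded down) see the old design on the left, and the hypothetical `shuffled_options` reorders answer choices for each respondent. Keeping an “Other” choice pinned to the end is an assumption of this sketch.

```python
import random


def assign_positions(respondent_ids, seed=None):
    """Counterbalance design placement: half of the respondents (rounded down)
    see the old design on the left; the rest see it on the right."""
    rng = random.Random(seed)
    ids = list(respondent_ids)
    rng.shuffle(ids)  # random order, so who lands in each half is random
    half = len(ids) // 2
    return {
        rid: ("old", "new") if i < half else ("new", "old")  # (left, right)
        for i, rid in enumerate(ids)
    }


def shuffled_options(options, rng, pinned=("Other",)):
    """Return answer options in a fresh random order for one respondent,
    keeping any pinned options (assumed here: "Other") at the end."""
    movable = [o for o in options if o not in pinned]
    rng.shuffle(movable)
    return movable + [o for o in options if o in pinned]


layout = assign_positions(range(1, 101), seed=7)
rng = random.Random(7)
print(shuffled_options(["Price", "Features", "Support", "Other"], rng))
```

Because each design appears on each side for half the sample, comparing how often the right-hand design wins in each half shows whether position, rather than the design itself, is driving the preference.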

- Use conditional questions: Most survey software now lets you set logic and conditions so that respondents see only the questions relevant to them. There’s no point in asking people why they wouldn’t recommend their rental car company if they would recommend it. Conditionals can also be used to randomly assign different questions to different respondents. This can be especially helpful if your survey is long and complicated. Instead of arguing over whose question gets kicked off the island, randomly assign half the participants to receive half of those borderline questions.

- Ask open-ended and closed-ended questions: Open-ended responses let you capture what’s top of mind for respondents. Yes, categorizing verbatim responses takes time, but when it is done systematically it can be worth it. We often ask open-ended questions in pretests to get the range of responses, and then ask closed-ended questions with finite responses once we feel we’ve covered most choices. Or, we’ll ask an open-ended question first, before planting ideas in users’ heads about their preferences or reasons. It’s usually good practice to add an “other” option to closed-ended questions, as there always seem to be options you haven’t offered.

- Focus more on who you are surveying than how many: As with sample sizes in general, and survey sample sizes in particular, there are a lot of misconceptions about what “small” and “large” samples can tell you. There is a perception that a small sample (which could be 100, 500 or 2000 depending on who you ask) isn’t “representative.” While representativeness is different from precision, the concern is that results from smaller samples are misleading, biased or just plain useless. While estimates of customer attitudes will be more precise with larger samples, the averages, proportions and frequencies you obtain from, say, 30 users are surprisingly stable. If, at a sample size of 30, 80% of users report scanning QR codes on their mobile phones for discounts, it’s highly improbable that after 300 respondents the proportion would fall below 50%. Inaccurate results are much more likely to come from surveying the wrong people than from not surveying enough of the right people.
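The two uses of conditional logic in the conditional-questions tip, skipping irrelevant follow-ups and splitting borderline questions across respondents, might look like this in Python. The function names, the question IDs and the cutoff of 6 or below on a 0-10 likelihood-to-recommend scale are all illustrative assumptions; real survey software expresses the same rules through its own skip-logic settings.

```python
import random

# Hypothetical cutoff: scores of 0-6 on a 0-10 likelihood-to-recommend item
# trigger the "why wouldn't you recommend us?" follow-up.
FOLLOW_UP_CUTOFF = 6


def show_follow_up(recommend_score: int) -> bool:
    """Skip logic: only low scorers see the 'why not recommend?' question."""
    return recommend_score <= FOLLOW_UP_CUTOFF


def split_borderline(respondent_ids, borderline_questions, seed=None):
    """Randomly assign half the respondents to the first half of the borderline
    questions and the other respondents to the second half."""
    rng = random.Random(seed)
    ids = list(respondent_ids)
    rng.shuffle(ids)  # random order decides who gets which set
    q_mid = len(borderline_questions) // 2
    first, second = borderline_questions[:q_mid], borderline_questions[q_mid:]
    half = len(ids) // 2
    return {rid: first if i < half else second for i, rid in enumerate(ids)}


plan = split_borderline(range(1, 201), ["q7", "q8", "q9", "q10"], seed=1)
print(show_follow_up(4), show_follow_up(9))  # True False
```

Each borderline question is still answered by roughly half the sample, which is often enough to tell whether it earns a permanent place in the survey.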
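The stability claim in the sample-size tip can be checked with a little arithmetic. The sketch below assumes 24 of 30 respondents (80%) report scanning QR codes for discounts. It computes the exact binomial probability of seeing 24 or more such responses if the true rate were only 50%, plus a 95% adjusted-Wald (Agresti-Coull) confidence interval for the true rate. Adjusted-Wald is one common choice for small samples, not the only one, and the function names are illustrative.

```python
from math import comb, sqrt


def prob_at_least(k, n, p):
    """Exact binomial tail: P(X >= k) when X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def adjusted_wald(x, n, z=1.96):
    """Adjusted-Wald (Agresti-Coull) confidence interval for a proportion:
    add z^2/2 successes and z^2/2 failures, then apply the usual Wald formula."""
    n_adj = n + z**2
    p_adj = (x + z**2 / 2) / n_adj
    margin = z * sqrt(p_adj * (1 - p_adj) / n_adj)
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


x, n = 24, 30  # 80% of 30 respondents, as in the example above
print(f"P(>= {x} of {n} | true rate 50%) = {prob_at_least(x, n, 0.5):.5f}")
low, high = adjusted_wald(x, n)
print(f"95% adjusted-Wald interval: {low:.2f} to {high:.2f}")  # 0.62 to 0.91
```

The tail probability comes out to roughly 0.07%, and the interval runs from about 62% to 91%, so a true rate below 50% is very unlikely. Neither calculation says anything about whether those 30 were the right people to ask, which is the larger risk.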