Is there such a thing as usability? This might sound like a silly question considering the industry around usability testing and user experience consulting (not to mention this website). But you can’t touch usability, and there is no usability thermometer to measure its presence or absence. While we can talk about usability and know it when we see it (or really, know it when we don’t see it), what data is there that shows usability exists?

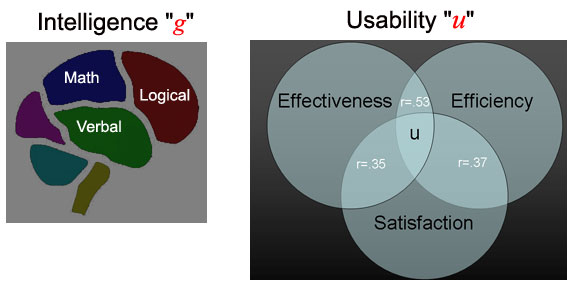

It turns out there is surprisingly little research on the existence of usability. The international standards that define usability (ISO 9241-11 and now ISO 13407) don’t offer evidence for its existence, just guidance on what we mean when we say usability. Usability is an abstract construct, like intelligence. There is no intelligence thermometer or place in the brain where we can measure intelligence, yet we speak about it and many important decisions are made in the name of IQ. Solving difficult math problems, having a large vocabulary and performing other mental calculations are what many people would consider signs of high intelligence. Intelligence, then, is measured indirectly using verbal and mathematical tests. People who score highly on, say, verbal reasoning also tend to score highly on mathematical ability, which is taken as evidence for a general intelligence, called “g”. There is much controversy about whether we should combine tests of differing mental ability or only speak in terms of multiple intelligences. Controversy aside, the concept is a useful model for other abstractions such as usability.

Over the years I’ve been collecting data from usability tests on websites, desktop applications, physical products, cell phones and Interactive Voice Response systems (IVRs). I’m up to around 120 such tests, each of which contains some quantified aspect of usability: usually completion rates and task times and, to a lesser extent, measures of satisfaction and error rates. There are between 5 and 350 users in each test. If usability does exist, then this would be a good place to find it.

Along with Jim Lewis, I employed a technique called Factor Analysis (the same technique originally used to ‘discover’ g), and we found a pattern in the data that suggests a general construct of usability (which we call ‘u’). The general pattern is that the different usability metrics (task times, completion rates, satisfaction scores and errors) correlate with each other. For example, users who take less time and commit fewer errors in completing tasks tend to record higher satisfaction scores.
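To make the idea of metrics "correlating with each other" concrete, here is a minimal sketch in Python. It is not the analysis described above: the data are synthetic, the metric names and noise levels are assumptions chosen for illustration, and it extracts a single principal component from the correlation matrix as a simpler stand-in for a full factor analysis.

```python
import math
import random

random.seed(1)  # fixed seed so the illustration is repeatable

# Synthetic data only (an assumption for illustration): each hypothetical
# user gets a latent "usability experience" score, and every metric is
# that score plus random noise. Time and errors go down as it goes up.
N_USERS = 200
latent = [random.gauss(0, 1) for _ in range(N_USERS)]
metrics = {
    "task_time":    [-u + random.gauss(0, 0.5) for u in latent],
    "errors":       [-u + random.gauss(0, 0.5) for u in latent],
    "completion":   [u + random.gauss(0, 0.5) for u in latent],
    "satisfaction": [u + random.gauss(0, 0.5) for u in latent],
}
names = list(metrics)


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def first_component(matrix, iterations=200):
    """Largest eigenvalue and its eigenvector, found by power iteration."""
    k = len(matrix)
    v = [1.0] + [0.0] * (k - 1)  # start vector not orthogonal to the answer
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(k)) for i in range(k)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    eigenvalue = sum(
        v[i] * matrix[i][j] * v[j] for i in range(k) for j in range(k)
    )
    return eigenvalue, v


# Correlation matrix of the four metrics.
corr = [[pearson(metrics[a], metrics[b]) for b in names] for a in names]

# Loadings: how strongly each metric relates to the single shared component.
eigenvalue, vector = first_component(corr)
loadings = {n: math.sqrt(eigenvalue) * x for n, x in zip(names, vector)}
if loadings["satisfaction"] < 0:  # orient so higher means "more usable"
    loadings = {n: -x for n, x in loadings.items()}

for name in names:
    print(f"{name:>12}: loading {loadings[name]:+.2f}")
print(f"share of variance explained: {eigenvalue / len(names):.0%}")
```

In this toy setup every metric loads strongly on one component because the data were generated that way. With real test data, the interesting question is whether a similar shared pattern appears without being built in.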

We found that the general construct of usability has two underlying factors, one objective and one subjective. The prototypical effectiveness and efficiency metrics (task times, completion rates and errors) load on the “objective factor,” while post-task and post-test satisfaction scores load on the “subjective factor.” What does that mean exactly? Well, like intelligence, we can say there is evidence for an overall general construct of usability, but it also has meaningful sub-constructs. Just as someone can excel mathematically but not verbally, interfaces can be efficient but users might not like them much. And just to end with one of those standardized testing analogies: math ability : objective factor :: verbal ability : subjective factor.