Completion rates are the gateway metric.

If users can’t complete tasks on a website, not much else matters.

The only thing worse than users failing a task is users failing a task and thinking they’ve completed it successfully. This is a disaster.

The term was made popular by Gerry McGovern, and disasters are anathema to websites and software.

Measuring Disasters

Have you ever bought the wrong product, or found out later that you got the wrong information, such as the value of a car, the shipping costs, or the delivery time? If so, you know how frustrating and inconvenient it can be.

The most effective way to measure disasters is to collect binary completion rates (pass or fail) and ask users how confident they are that they completed the task successfully. I use a single 7-point item to measure confidence (1 = Not at all confident, 7 = Extremely confident), but you could use a 5-, 9-, or 11-point scale too.

Disasters occur when users fail the task yet report that they are extremely confident they completed it successfully (e.g., 7s on a 7-point scale).
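A minimal sketch of the disaster rule in Python, assuming each attempt is recorded as a pass/fail flag plus a single confidence rating. The `Attempt` record and the function names are hypothetical and chosen only for illustration; the rule itself (a failed task rated at the top of the confidence scale) comes from the text.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    """One user's attempt at one task (hypothetical record layout)."""
    passed: bool     # binary completion: True = pass, False = fail
    confidence: int  # single item: 1 = Not at all confident ... scale_max = Extremely confident


def is_disaster(attempt: Attempt, scale_max: int = 7) -> bool:
    """A disaster: the task was failed, yet confidence is at the top of the scale."""
    return (not attempt.passed) and attempt.confidence == scale_max


def disaster_rate(attempts: list[Attempt], scale_max: int = 7) -> float:
    """Share of attempts (0.0 to 1.0) that are disasters."""
    if not attempts:
        raise ValueError("no attempts to score")
    return sum(is_disaster(a, scale_max) for a in attempts) / len(attempts)


if __name__ == "__main__":
    task = [
        Attempt(passed=True, confidence=7),
        Attempt(passed=False, confidence=7),  # disaster: failed but extremely confident
        Attempt(passed=False, confidence=3),  # failure, but the user is unsure
        Attempt(passed=True, confidence=6),
    ]
    print(disaster_rate(task))  # 0.25
```

The `scale_max` parameter lets the same rule work on a 5-, 9-, or 11-point item.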

How Common are Disasters?

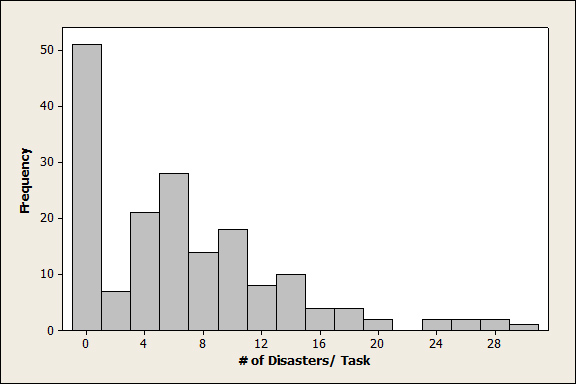

Just how often do such disasters occur? It depends on the task and interface. I’ve used this method for several years now on software and websites. Across 174 tasks, 1,200 users, and 14 different websites and consumer software products, the median disaster rate is 5%. I’ve seen task disaster rates as low as 0% and as high as 30%. The distribution of disasters by task is shown in Figure 1.

Figure 1: Frequency of disasters by task. Many tasks have no disasters, while a few have disaster rates above 25%.
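Summaries like the median rate and a histogram of per-task rates can be computed with a short script. This sketch assumes per-task tallies of disasters and users (a hypothetical data layout) and uses 5-percentage-point bins; the bin width is an illustrative choice, not necessarily the one used for Figure 1, and the tallies are made up.

```python
from collections import Counter
from statistics import median


def summarize_disasters(tallies, bin_pct=5):
    """Summarize per-task disaster rates.

    `tallies` maps a task name to (number of disasters, number of users).
    Returns the median, minimum, and maximum rate plus a histogram mapping
    each bin's lower edge (in percent) to the number of tasks in that bin.
    """
    rates = [d / n for d, n in tallies.values()]
    # Integer arithmetic keeps rates that sit exactly on a bin edge in the right bin.
    bins = Counter((d * 100) // (n * bin_pct) * bin_pct for d, n in tallies.values())
    return {
        "median": median(rates),
        "min": min(rates),
        "max": max(rates),
        "histogram": dict(sorted(bins.items())),
    }


if __name__ == "__main__":
    # Made-up tallies for five tasks: (disasters, users).
    tallies = {"A": (0, 10), "B": (1, 20), "C": (3, 10), "D": (0, 12), "E": (1, 8)}
    print(summarize_disasters(tallies))
    # {'median': 0.05, 'min': 0.0, 'max': 0.3, 'histogram': {0: 2, 5: 1, 10: 1, 30: 1}}
```

Binning on integer counts rather than float rates avoids cases such as 0.15 / 0.05 evaluating to slightly less than 3 in floating point.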

Task-Level Disasters

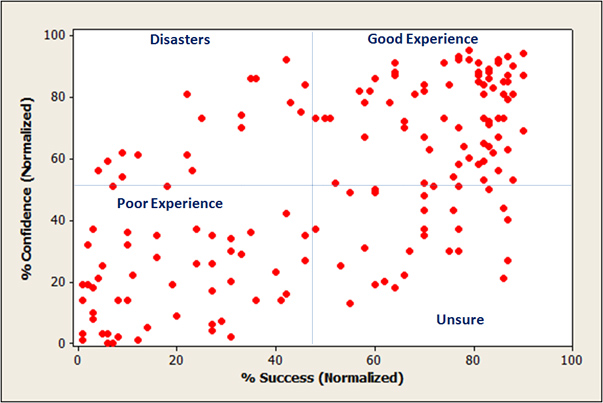

In addition to looking at disasters for individual users, we can also look at task-level experiences. By plotting success against confidence, we can identify tasks where users misinterpret cues that lead them to believe they completed the task correctly when they didn’t. Figure 2 plots the 174 tasks by success and confidence.

Figure 2: Task success and task confidence for 174 tasks across consumer software and websites.

Technical Note: The confidence score was created by taking the mean confidence score for each task and dividing it by the maximum possible score, so a mean score of 6 on a 7-point scale becomes 86%. Confidence scores were a bit inflated (as are most rating scales): the average confidence by task was 74%. I transformed these percent-confident scores so 74% became the center point. The average task completion rate was also not 50% but 66% (a bit lower than the 78% from the larger sample), and I likewise transformed completion rates so 66% became the “average” of 50%. These transformations stretch the tasks out so we can better discriminate between good, mediocre, and poor experiences.
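The first step of the technical note is plain arithmetic: the mean rating divided by the scale maximum. The note does not say exactly how the scores were re-centered, so the `recenter` function below is an assumption, a piecewise-linear stretch that maps the observed average to 50% while keeping 0% and 100% fixed. Other transformations would fit the same description.

```python
from statistics import mean


def percent_confident(ratings, scale_max=7):
    """Mean confidence rating for one task divided by the maximum possible rating."""
    return mean(ratings) / scale_max


def recenter(p, center):
    """ASSUMED transformation: piecewise-linear stretch that sends `center` to 0.5.

    Values at or below the center are scaled into [0, 0.5] and values above it
    into (0.5, 1], so 0 and 1 stay fixed. The source does not specify its method.
    """
    if not 0 < center < 1:
        raise ValueError("center must lie strictly between 0 and 1")
    if p <= center:
        return 0.5 * p / center
    return 0.5 + 0.5 * (p - center) / (1 - center)


if __name__ == "__main__":
    print(f"{percent_confident([6, 6, 6]):.0%}")  # 86%: a mean of 6 on a 7-point scale
    print(recenter(0.74, center=0.74))  # 0.5: average confidence becomes the midpoint
    print(recenter(0.66, center=0.66))  # 0.5: average completion becomes the midpoint
```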

The correlation between task completion and confidence is r = .67, and this relationship shows up as the diagonal spread of the data. The bulk of the tasks fall within the upper-right and lower-left quadrants, which I call good and poor experiences, respectively. Here users are either completing tasks and know it, or failing tasks and generally know it.
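Assuming r here is the usual Pearson correlation computed over task-level pairs of completion rate and confidence, a dependency-free sketch looks like this. On Python 3.10 and later, `statistics.correlation` computes the same coefficient. The input lists are hypothetical.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of cross-products and squared deviations from the means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a sequence is constant")
    return sxy / sqrt(sxx * syy)


if __name__ == "__main__":
    # Hypothetical task-level data: completion rate and percent confident per task.
    completion = [0.95, 0.80, 0.55, 0.30, 0.70]
    confidence = [0.90, 0.85, 0.70, 0.50, 0.60]
    print(round(pearson_r(completion, confidence), 2))
```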

Disasters fall in the upper-left quadrant, where task success is below average but confidence is above average. Task-level disasters aren’t rampant, but the 23 in this sample represent about 13% of the tasks (23 of 174). The lower-right quadrant shows above-average completion rates and below-average confidence; I call these “unsure.” These tasks may fly under the radar because their completion rates are high, but the low confidence scores suggest problems are lurking and there’s room for improvement.
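The quadrant labels amount to a small classification rule. This sketch compares each task against the averages from the technical note (66% completion, 74% confidence) on the untransformed scores. Counting a score exactly at the average as “above” is an assumption, since the text doesn’t say how ties are handled, and the example task scores are hypothetical.

```python
from collections import Counter

# Averages from the technical note (untransformed percentages, as fractions).
AVG_SUCCESS = 0.66
AVG_CONFIDENCE = 0.74


def quadrant(success, confidence, avg_success=AVG_SUCCESS, avg_confidence=AVG_CONFIDENCE):
    """Label a task by where it falls relative to average success and confidence.

    Assumption: a score exactly at the average counts as above average.
    """
    high_success = success >= avg_success
    high_confidence = confidence >= avg_confidence
    if high_success and high_confidence:
        return "good"
    if not high_success and not high_confidence:
        return "poor"
    if high_confidence:  # below-average success, above-average confidence
        return "disaster"
    return "unsure"  # above-average success, below-average confidence


def quadrant_shares(tasks):
    """Fraction of tasks in each quadrant, from (success, confidence) pairs."""
    counts = Counter(quadrant(s, c) for s, c in tasks)
    return {label: n / len(tasks) for label, n in counts.items()}


if __name__ == "__main__":
    tasks = [(0.90, 0.85), (0.40, 0.60), (0.50, 0.90), (0.80, 0.60)]
    print([quadrant(s, c) for s, c in tasks])  # ['good', 'poor', 'disaster', 'unsure']
    print(quadrant_shares(tasks))
    print(f"{23 / 174:.0%}")  # 13%: the share of disaster tasks reported above
```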

Task failure is bad, but when users think they have the right information, or think they did something correctly when they didn’t (like accidentally voting for the wrong candidate), the user experience goes from bad to worse. Tracking both task success and confidence can help identify these disasters early and hopefully prevent further damage.