User research is not a hard science.

But like all behavioral sciences, it has principles, best practices, rules, and recommendations.

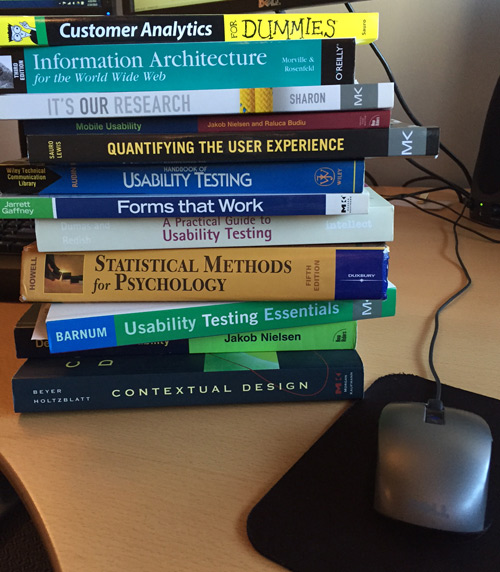

Across dozens of tutorials, five books, many articles and blogs, boot camps, and discussions with both seasoned and new UX professionals, I’ve noticed a number of common problems and themes related to measuring the customer experience.

Here’s a list of 25 rules and recommendations that people tend to find most helpful.

- Mix methods, metrics, and tools. Don’t always use the same ones. And use them in combination: think "and" instead of "or."

- It’s much easier to prove that something is not usable than to prove that it is usable. Small samples can quickly show that an experience is terrible. However, just because a few participants don’t have a problem doesn’t mean the experience is great.

- Preference does not equal performance, but the two often go hand in hand. Measure what users do, ask them what they prefer, and then reconcile any difference.

- No single sample size (not even five) works for every study.

- If a problem affects 100% of your user population, testing with one user will expose that problem.

- Randomize. Wherever you can, alternate the order of tasks, products, questions, and response options. Randomization can often minimize even major flaws in research designs.

- To cut your margin of error in half, quadruple your sample size.

- A 5-point rating scale is usually better than a 3-point scale, a 7-point scale is slightly better than a 5-point scale, and an 11-point scale is ever-so-slightly better than a 7-point scale. Increasing a scale to over 11 points adds little value, especially if you’re using more than one item in a questionnaire, as with the SUS or SUPR-Q.

- The number of scale points doesn’t matter much. If you find yourself arguing about the number of points in a response scale, you’ve probably already spent too much time on the topic. Ditto for arguments about having neutral responses and whether the positive responses should be on the left or right. Pick a scale and stick with it over time.

- No one in a company owns the user data. Everyone owns the user data. Everyone can contribute, everyone is responsible, and everyone should care.

- While Mad Men–style one-way mirrors in labs may seem cool and may even impress customers, digital technology has largely replaced the need for noisy, dark, cramped observation rooms next to the usability lab.

- Know who your users are. While personas serve a purpose, you don’t need fancy, life-size cardboard cut-outs. You do need to determine which behaviors, goals, and attitudes define the people who use your website or software and who buy your products, and choose your test participants accordingly.

- Know what users are trying to do, and you’ll avoid one of the biggest disconnects we see. Often, product stakeholders are sure that users are doing one thing while the data reveals that they’re doing something else. Conduct a top-task analysis; it’s one of the best bangs for your research buck.

- Many design differences are not noticeable to users. When very large samples detect no differences in attitudes and behaviors, it suggests that the design changes have little impact on users’ goals.

- What users say and what they do aren’t always the same, but they often are. Measure both.

- Usability doesn’t exist. It’s a reified construct. There’s no usability yardstick. We rely instead on the outcomes of poor usability: longer task times, more failed tasks, and low ratings. We should, however, aim for that yardstick by continually improving our measurement methods.

- Usability isn’t the only thing users care about in software and websites. Utility, appearance, credibility, and accessibility are among the main factors you must consider.

- Measures of usability are not invariant. They change based on the tasks, users, evaluators, and other variables.

- Be open-minded. Don’t dismiss new tools, techniques, or metrics without trying them out.

- Think lean. By the time a report has been revised and presented, you should already know what the problems are and, ideally, how to solve them. While the 1980s were a great decade for music and big hair, be sure your reports aren’t stuck there.

- Jakob Nielsen and Jared Spool can both be right. Don’t get caught up in picking one UX celebrity over another. While there are different perspectives on how to conduct and improve user research, there’s much more agreement than disagreement.

- There’s no killer app to solve all your usability problems. Don’t be wedded to any tool.

- What you see is not always what you get. Eye-trackers are cool and powerful tools. Executives love the heat maps. But just because participants look at a design element or copy doesn’t mean they perceive, understand, or remember it. Many research questions can be answered without eye tracking.

- What you get is what you see. Different evaluators tend to see different usability problems—even if they watch the same videos of the same users!

- There are many paths up the same mountain. Usability is less about generating consistent results between evaluators than it is about finding and fixing problems.
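Several of the sample-size rules (small samples expose frequent problems quickly, a problem that affects everyone shows up with one user, and no single sample size fits every study) follow from a standard binomial calculation: if each participant independently has probability `p` of encountering a problem, the chance of seeing it at least once in `n` sessions is `1 - (1 - p)^n`. The Python sketch below assumes that independence and a single fixed `p` per problem, which real studies only approximate; the function names `prob_detect` and `sample_size_for` are hypothetical.

```python
import math


def prob_detect(p: float, n: int) -> float:
    """Probability that a problem is observed at least once in n sessions.

    Assumes (hypothetically) that each participant independently runs into
    the problem with the same probability p.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1.0 - (1.0 - p) ** n


def sample_size_for(p: float, goal: float) -> int:
    """Smallest n whose detection probability reaches `goal`.

    Requires 0 < p < 1 and 0 < goal < 1; solves 1 - (1 - p)**n >= goal for n.
    """
    if not (0.0 < p < 1.0 and 0.0 < goal < 1.0):
        raise ValueError("p and goal must both be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - goal) / math.log(1.0 - p))


if __name__ == "__main__":
    # How likely are five participants to reveal problems of different frequency?
    for p in (1.0, 0.31, 0.10):
        print(f"p={p:.2f}: {prob_detect(p, 5):.2f}")
    # Participants needed for an 85% chance of seeing a problem that affects 10% of users.
    print(sample_size_for(0.10, 0.85))
```

With `p = 1`, a single participant gives a detection probability of 1, matching the one-user rule. With `p = 0.10`, five participants give only about a 41% chance of seeing the problem even once, which is why a clean small-sample study can't prove that an experience is usable.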
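For the randomization rule, two common ways to vary task order are to give each participant an independently shuffled order, or to rotate the order so every task appears in every position equally often (a simple cyclic Latin square). The sketch below shows both under stated assumptions: the task names and the functions `shuffled_orders` and `rotated_orders` are hypothetical, and the cyclic rotation balances position but not which task immediately precedes which; designs such as balanced Latin squares address that.

```python
import random
from typing import List, Sequence


def shuffled_orders(tasks: Sequence[str], n_participants: int, seed: int = 0) -> List[List[str]]:
    """Give each participant an independently shuffled copy of the task list.

    Seeding the generator makes the assignment reproducible for later analysis.
    """
    rng = random.Random(seed)
    orders = []
    for _ in range(n_participants):
        order = list(tasks)  # copy so the caller's sequence is not mutated
        rng.shuffle(order)
        orders.append(order)
    return orders


def rotated_orders(tasks: Sequence[str], n_participants: int) -> List[List[str]]:
    """Cyclic Latin square: participant i starts at task i (mod k) and wraps around.

    When n_participants is a multiple of len(tasks), every task appears in
    every position equally often.
    """
    k = len(tasks)
    return [[tasks[(i + j) % k] for j in range(k)] for i in range(n_participants)]


if __name__ == "__main__":
    tasks = ["Find a product", "Compare plans", "Check out"]  # hypothetical tasks
    for order in rotated_orders(tasks, 3):
        print(order)
    print(shuffled_orders(tasks, 2, seed=42))
```

Shuffling is simpler and works for any number of participants; rotation guarantees positional balance but only when the participant count is a multiple of the number of tasks.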
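The rule about quadrupling the sample size follows from the margin of error shrinking with the square root of the sample size. As a sketch, assuming a roughly normal sampling distribution and a known standard deviation (the 20-second value below is a hypothetical spread of task times, and `margin_of_error` is a hypothetical helper), the half-width of a 95% confidence interval for a mean is `z * s / sqrt(n)`:

```python
import math


def margin_of_error(sd: float, n: int, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for a mean.

    Uses the normal approximation z * sd / sqrt(n). For small samples, a
    t critical value (which is larger than 1.96) is the more accurate choice.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    return z * sd / math.sqrt(n)


if __name__ == "__main__":
    sd_seconds = 20.0  # hypothetical standard deviation of task times
    for n in (25, 100, 400):
        print(n, round(margin_of_error(sd_seconds, n), 2))
```

Going from 25 to 100 to 400 participants cuts the margin from about 7.8 to 3.9 to 2.0 seconds, so each halving costs four times the participants. The same square-root relationship holds for proportions such as completion rates, where the margin is `z * sqrt(p * (1 - p) / n)`.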