Many software companies track and use the Net Promoter Score as a gauge of customer loyalty.

Positive word of mouth is a critical driver of future growth. If you have a usable product, customers will tell their friends about the positive experience.

Conversely, a poor user experience will lead customers to tell their friends how unusable a product is. But what are good Net Promoter and usability scores?

Consumer & Productivity Software Benchmark Survey

Over the past six months, I conducted the largest survey of attitudes about usability and loyalty in the consumer and productivity software industry. I received 1,726 responses from current users of 17 of the best-known names in software. The products are:

- ACT!

- AutoCAD

- Dreamweaver

- Excel

- Drop Box

- Flash

- iTunes

- McAfee Anti-Virus

- Mint.com

- Norton Anti-Virus

- Peachtree Accounting

- Photoshop

- PowerPoint

- QuickBooks

- Quicken

- TurboTax

- Word

Users came from 75 different countries, with the bulk from North America (73%), Europe (14%), Asia (10%), South America (2%) and Africa (1%). A bit over half were male (57%), and the average age was 32. These users were asked a number of loyalty and standardized usability questions, including the Likelihood to Recommend question and the 10-item System Usability Scale (SUS).

What’s a good Net Promoter Score?

The Net Promoter Score is calculated from the 11-point (0 to 10) Likelihood to Recommend question. It is computed by subtracting the percentage of Detractors (0-6) from the percentage of Promoters (9-10).
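The calculation can be sketched in a few lines of Python. This is a minimal illustration, assuming each response is an integer rating from 0 to 10; the function name `net_promoter_score` and the sample ratings are hypothetical, not taken from the survey data.

```python
from typing import Iterable


def net_promoter_score(ratings: Iterable[int]) -> float:
    """Return the Net Promoter Score, in percentage points, for 0-10 ratings."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("need at least one rating")
    for r in ratings:
        if not 0 <= r <= 10:
            raise ValueError(f"rating out of range: {r}")
    promoters = sum(1 for r in ratings if r >= 9)   # 9-10
    detractors = sum(1 for r in ratings if r <= 6)  # 0-6
    # Passives (7-8) count in the denominator but not the numerator.
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical responses from ten users (not survey data).
sample = [10, 9, 9, 8, 7, 7, 6, 5, 10, 3]
print(net_promoter_score(sample))  # → 10.0 (4 promoters, 3 detractors, 10 users)
```

Because passives still count toward the total, a product with many passives can have a modest score even when it has few detractors.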

Across all 17 products, the average Net Promoter Score is 21%, with a range of -26% to 56%. TurboTax gets the award for the highest Net Promoter Score. A full list of product Net Promoter Scores is available in the detailed benchmark report, which can be purchased.

The importance of product-level benchmarks

The only other Net Promoter benchmarks that exist are at the company level. While these are important and helpful for marketing and branding efforts, they make it difficult to isolate how much a product or group of products is contributing to loyalty.

Net Promoter Scores can vary substantially within the same company. While the products in the Microsoft Office suite varied by only 7 percentage points, some products from Intuit differed by a substantial 50 percentage points. Benchmarking the relevant product, rather than the whole company, lets you home in on what needs improving, especially when customers recognize the product but not the company that makes it.

Did you recommend? (Retro-Recommend Rate)

In addition to asking customers how likely they are to recommend in the future, I asked whether they had actually recommended the product in the past 12 months. Humans are notoriously poor predictors of their future behavior, so this is a nice supplement to the Likelihood to Recommend question.

Across all products, the average retro-recommend rate was 45%, meaning that for the average product, just under half the users said they had referred a friend or colleague to the product. This varied across products: 72% of current Drop Box customers reported a retro-recommendation, whereas only 29% of Norton Anti-Virus customers did.

Product Referral Rates

Another great measure of customer loyalty is the percentage of customers who were themselves referred to the product by someone else (the referral rate). This is another way to gauge word of mouth, supplementing the retro-recommend rate and the Likelihood to Recommend question.

Across all products, the average referral rate was 47%, meaning that for the average product, around half the users said a friend or colleague had referred them to it. Referral rates ranged from a high of 70% for Peachtree Accounting to a low of 32% for Microsoft Word.
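Both the retro-recommend rate and the referral rate are simple proportions of yes/no answers. A minimal sketch, assuming each answer is recorded as a boolean; the function `proportion_yes` and the answers below are hypothetical illustrations for a single product, not survey data.

```python
def proportion_yes(answers: list[bool]) -> float:
    """Percentage of respondents who answered 'yes' (True)."""
    if not answers:
        raise ValueError("need at least one answer")
    return 100.0 * sum(answers) / len(answers)


# Hypothetical answers for one product (True = yes).
did_recommend = [True, False, True, True, False, False, True, False]  # past 12 months
was_referred = [False, True, True, False, False, True, False, False]

retro_recommend_rate = proportion_yes(did_recommend)
referral_rate = proportion_yes(was_referred)
print(f"retro-recommend rate: {retro_recommend_rate:.1f}%")  # → 50.0%
print(f"referral rate: {referral_rate:.1f}%")                # → 37.5%
```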

What’s a good Usability Score?

I used the industry-standard System Usability Scale (SUS) to measure the perceived ease of use of the 17 products. SUS is a 10-item questionnaire with possible scores ranging from 0 to 100. The average SUS score across over 500 products is 68. The average SUS score for this group is 73, with a low of 63 and a high of 84.
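For readers who want to reproduce SUS scores, the standard scoring rule is: for odd-numbered (positively worded) items, subtract 1 from the 1-5 response; for even-numbered (negatively worded) items, subtract the response from 5; then multiply the 0-40 total by 2.5. A minimal Python sketch, using a hypothetical respondent; `sus_score` is an illustrative name.

```python
def sus_score(responses: list[int]) -> float:
    """Score one respondent's 10 SUS answers (each 1-5) on the 0-100 scale."""
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(not 1 <= r <= 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item, r in enumerate(responses, start=1):
        if item % 2 == 1:
            total += r - 1  # odd items are positively worded
        else:
            total += 5 - r  # even items are negatively worded
    return total * 2.5      # rescale the 0-40 raw total to 0-100


# Hypothetical respondent (not survey data).
answers = [4, 2, 4, 1, 5, 2, 4, 2, 4, 1]
print(sus_score(answers))  # → 82.5
```

A product-level score such as the 73 above would typically be the mean of these per-respondent scores.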

I converted the raw SUS scores to percentile ranks and found that the average score corresponds to the 63rd percentile, meaning this group of products has higher perceived usability than 63% of all products tested.

This makes sense. We’d expect a wide selection of heavily used software products to be easier to use than, say, less widely used and more complex business-to-business software. The lowest and highest scores correspond to percentile ranks of 36% and 86%, respectively.

Learnability

Items 4 and 10 of the System Usability Scale provide a measure of learnability. iTunes, TurboTax and Mint.com lead the way as the easiest products to learn. Not surprisingly, the products that take time to master (and often require special skills) have the lowest learnability scores: Photoshop, Dreamweaver and AutoCAD.
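One way to turn items 4 and 10 into a 0-100 learnability score is to reuse the standard SUS item scoring and rescale: the two items contribute 0-8 points, multiplied by 12.5, and the remaining eight items contribute 0-32 points, multiplied by 3.125. This scaling is an assumption based on a commonly used convention; the article does not say exactly how its learnability scores were computed. `sus_subscales` and the responses are hypothetical.

```python
LEARNABILITY_ITEMS = (4, 10)  # SUS items treated as the learnability subscale


def sus_subscales(responses: list[int]) -> tuple[float, float]:
    """Return (learnability, usability) subscale scores, each on 0-100."""
    if len(responses) != 10 or any(not 1 <= r <= 5 for r in responses):
        raise ValueError("expected 10 SUS responses between 1 and 5")
    # Same per-item contribution as standard SUS scoring (0-4 points each).
    contrib = {
        item: (r - 1) if item % 2 == 1 else (5 - r)
        for item, r in enumerate(responses, start=1)
    }
    learn_raw = sum(contrib[i] for i in LEARNABILITY_ITEMS)  # 0-8
    usable_raw = sum(
        v for i, v in contrib.items() if i not in LEARNABILITY_ITEMS
    )  # 0-32
    # Assumed rescaling so each subscale spans 0-100.
    return learn_raw * 12.5, usable_raw * 3.125


# Hypothetical respondent (not survey data).
answers = [4, 2, 4, 3, 5, 2, 4, 2, 4, 2]
print(sus_subscales(answers))  # → (62.5, 78.125)
```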

Full SUS scores and learnability scores by product are provided in the benchmark report.

Usability and Net Promoter

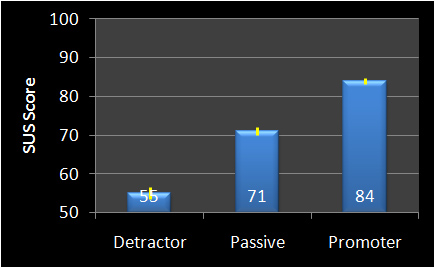

As I reported last year, there is a strong association between usability and loyalty. In general, the higher a product’s SUS score, the more likely people are to recommend it. The graph shows the SUS scores for detractors, passives and promoters.

This is consistent with last year’s data and shows that SUS scores above about 80 go along with positive promotion, while SUS scores below 60 reflect a less usable experience and go along with more negative word of mouth.
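Grouping respondents by their Likelihood to Recommend answer and averaging SUS within each group gives the kind of comparison the graph shows. A minimal sketch, assuming per-respondent (Likelihood to Recommend, SUS) pairs are available; the helper names `nps_category` and `mean_sus_by_category` and the data are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def nps_category(ltr: int) -> str:
    """Classify a 0-10 likelihood-to-recommend rating."""
    if not 0 <= ltr <= 10:
        raise ValueError(f"rating out of range: {ltr}")
    if ltr >= 9:
        return "promoter"   # 9-10
    if ltr >= 7:
        return "passive"    # 7-8
    return "detractor"      # 0-6


def mean_sus_by_category(pairs: list[tuple[int, float]]) -> dict[str, float]:
    """Average SUS score within each Net Promoter category."""
    groups: dict[str, list[float]] = defaultdict(list)
    for ltr, sus in pairs:
        groups[nps_category(ltr)].append(sus)
    return {category: mean(scores) for category, scores in groups.items()}


# Hypothetical (likelihood to recommend, SUS score) pairs, one per respondent.
data = [(10, 87.5), (9, 82.5), (8, 75.0), (7, 70.0), (5, 57.5), (3, 50.0)]
print(mean_sus_by_category(data))
# → {'promoter': 85.0, 'passive': 72.5, 'detractor': 53.75}
```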

Key Drivers of Customer Loyalty

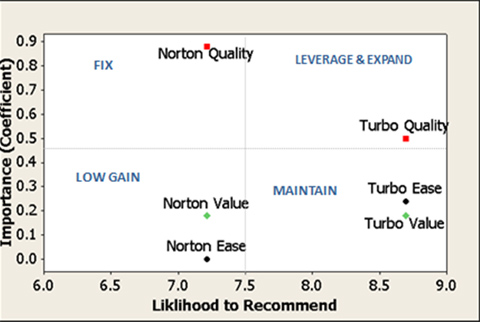

In addition to usability, I also asked customers how they perceive the quality of the product and the value they get for the price. I then used these questions to help explain the variation in Net Promoter Scores with a key-driver analysis. This essentially combines a multiple regression analysis (for importance) with the average responses (for satisfaction), giving a two-dimensional view of both the importance of and the level of satisfaction with the three attributes: usability, quality and value. The key-driver chart shows TurboTax and Norton Anti-Virus as examples.

TurboTax’s perceived quality has an importance rating of .5 (the coefficient from the regression analysis). This means a 1-point increase in perceived quality (on the same 0-10 scale) would increase the likelihood-to-recommend (LTR) score by .5 of a point. Put another way, it takes a 2-point increase or decrease in quality to move the LTR score 1 point.
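A key-driver analysis of this kind can be sketched as an ordinary least squares regression of LTR on the three attribute ratings, reading each coefficient as importance and each mean rating as satisfaction. This assumes unstandardized coefficients, which matches the interpretation above (a 1-point change on the 0-10 scale), and uses NumPy’s `np.linalg.lstsq`. The function `key_drivers` and the ratings are hypothetical, and the LTR values are generated without noise from known weights so the recovered coefficients can be checked; in practice the regression would be run separately for each product on real responses.

```python
import numpy as np


def key_drivers(
    ratings: np.ndarray, ltr: np.ndarray, names: list[str]
) -> dict[str, tuple[float, float]]:
    """Return {attribute: (importance, mean rating)}.

    Importance is the unstandardized OLS coefficient of each attribute when
    LTR is regressed on all attributes at once; the mean rating is the
    attribute's average score (the satisfaction axis of the chart).
    """
    # Prepend a column of ones so the model has an intercept.
    X = np.column_stack([np.ones(len(ltr)), ratings])
    coefs, *_ = np.linalg.lstsq(X, ltr, rcond=None)
    means = ratings.mean(axis=0)
    return {
        name: (float(coef), float(avg))
        for name, coef, avg in zip(names, coefs[1:], means)
    }


# Hypothetical 0-10 ratings, one row per respondent:
# columns are quality, ease of use, value.
ratings = np.array(
    [
        [8, 7, 6],
        [9, 9, 5],
        [6, 8, 7],
        [7, 5, 4],
        [10, 6, 8],
        [5, 7, 6],
    ],
    dtype=float,
)
# Noise-free LTR built from known weights, so the fit can be checked.
ltr = 1.0 + 0.5 * ratings[:, 0] + 0.25 * ratings[:, 1] + 0.1 * ratings[:, 2]

names = ["quality", "ease of use", "value"]
for name, (importance, avg) in key_drivers(ratings, ltr, names).items():
    print(f"{name:<12} importance={importance:.2f} mean rating={avg:.1f}")
```

With a quality coefficient of 0.5, a 2-point change in quality corresponds to 2 × 0.5 = 1 point of LTR, the same reading as in the TurboTax example.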

The importance of ease of use for TurboTax is right around .25, so the quality of TurboTax is seen as about twice as important as its ease of use. Increases or decreases in quality would have a more substantial impact on this product’s Net Promoter Score than value (price paid) or ease of use. In other words, users care most about getting their taxes filed accurately (quality), somewhat less about how easy the product is to use, and to a lesser extent about its price.

The story for Norton Anti-Virus is a bit different. Here again quality is very important, but its current rating suggests users are concerned about it. Ease of use doesn’t appear to affect attitudes about loyalty. While value has about the same importance as it does for TurboTax, users rate Norton’s value substantially lower, suggesting they don’t think it’s a good value for the price. A good place to start improving would be quality, where a 1-point increase in the quality score would result in a .9-point increase in the Likelihood to Recommend score (and therefore an increase in the Net Promoter Score).

A deeper dive into why users rate quality lower would identify areas for improvement. An alternative, and possibly easier, strategy may be to lower the price or explore better pricing options to deliver value. However, McAfee Anti-Virus (not shown) has even lower value ratings and higher importance scores for value. It may be that customers don’t like paying much, if anything, for anti-virus software relative to other software products, and within the industry Norton may be priced competitively. A key-driver analysis for all products and attributes is included in the benchmark report.

Third-party benchmarks for loyalty and usability are an excellent way to give meaning to all those numbers on corporate dashboards. It’s a lot easier to know how well your product is doing if you know where it stands relative to the competition or the industry average.