Most companies have more bugs and feature requests than they can realistically address.

In an earlier post, I presented ways to help you prioritize those features; now let’s figure out where to start.

This post describes an approach that works in many situations. It begins with a survey of your customers or prospects (or a representative sample of them) and has the following objectives:

- Measure customer attitudes.

- Determine the top tasks.

- Evaluate customers’ satisfaction with those top tasks.

- Analyze the key drivers.

- Create a scorecard.

1. Measure Customer Attitudes

Have a representative sample of your customers and/or prospects take a short survey. Use software from MeasuringU, SurveyAnalytics, or SurveyMonkey that supports conditional logic and randomized task order.

In the first part of the survey, have participants rate their attitudes toward a few overall product or company metrics, such as:

Loyalty: The Net Promoter Score or likelihood to repurchase.

Usability: A measure like SUS, SUPR-Q, or even a single question about the product’s ease of use.

Satisfaction: How satisfied customers are with the product, the value they receive, and the product’s reliability and quality.

You can get more detailed metrics by asking about attitudes toward support, delivery, or the sales process, but keep it short—don’t overload the participants early with too many questions. All you need at this stage is an assessment of how well customers’ expectations are being met; you’ll have the opportunity later to go into more detail.
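If you compute the loyalty and usability metrics yourself rather than relying on your survey tool, the scoring is simple. The following Python sketch implements the standard NPS rule (percentage of promoters rating 9 or 10 minus percentage of detractors rating 0 to 6 on the 0 to 10 likelihood-to-recommend scale) and standard SUS scoring. The function names are hypothetical, and the sketch assumes responses have already been cleaned into integers.

```python
from typing import Sequence


def net_promoter_score(ratings: Sequence[int]) -> float:
    """NPS: % promoters (9-10) minus % detractors (0-6) on a 0-10 scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be on the 0-10 scale")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    # Passives (7-8) count toward the total but toward neither group.
    return 100.0 * (promoters - detractors) / len(ratings)


def sus_score(responses: Sequence[int]) -> float:
    """SUS score (0-100) from the ten 1-5 item responses, in questionnaire order."""
    if len(responses) != 10:
        raise ValueError("SUS has exactly ten items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("SUS items use a 1-5 scale")
    total = 0
    for i, r in enumerate(responses):
        # Odd-numbered items (1, 3, ...; even index here) are positively worded
        # and contribute r - 1; even-numbered items contribute 5 - r.
        total += (r - 1) if i % 2 == 0 else (5 - r)
    # Each item contributes 0-4, so the sum (0-40) is scaled by 2.5 to 0-100.
    return total * 2.5


print(net_promoter_score([10, 9, 9, 8, 6]))  # 3 promoters, 1 detractor of 5 → 40.0
print(sus_score([3] * 10))                   # all neutral answers → 50.0
```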

2. Determine the Top Tasks

Software and websites are subject to feature creep, which can eventually undermine both your objective (drawing customers to your product or site) and your customers’ objective (accomplishing tasks and goals easily and quickly). Using information from feature lists, support logs, contextual inquiries, interviews, and your own product experience, come up with an exhaustive list of the tasks your product or site enables your customers to do. Consider including tasks that the software does not yet support.

Present these tasks in one survey, in randomized order, using customer-centered, rather than company-internal, language.

Notes:

– For products with hundreds or thousands of tasks, group the tasks. If you’re testing accounting software such as QuickBooks, for example, list the top tasks in the Accounts Payable, Reports, Account Registers, or Payroll sections. You can also sort tasks by granularity, from high-level (such as creating a 1099) to low-level (such as computing taxes for a vendor’s 1099).

– You can live with a little redundancy.
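Most survey tools randomize list order for you. If you build your own survey, a minimal sketch like the following gives each respondent a reproducible shuffled order, so that no task consistently benefits from appearing near the top of the list. The function name and the use of a respondent ID as the seed are illustrative assumptions, not features of any particular survey tool.

```python
import random
from typing import List, Sequence


def randomized_task_order(tasks: Sequence[str], respondent_id: str) -> List[str]:
    """Return a shuffled copy of the task list for one respondent."""
    # Seeding a private generator with the respondent ID makes each person's
    # order reproducible (useful for auditing) without touching global state.
    rng = random.Random(respondent_id)
    order = list(tasks)  # copy so the master list keeps its original order
    rng.shuffle(order)
    return order


tasks = ["Create a 1099", "Pay a vendor bill", "Run a payroll report", "Reconcile an account"]
print(randomized_task_order(tasks, "respondent-001"))
```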

Have your customers pick the tasks they most want to accomplish. Customers won’t read every task; instead, they will scan the list for the things that come to mind when they think about functional areas (like reports or payroll). Allow participants to select only 3 to 5 tasks. The goal is to identify the critical few tasks that your website or product should do well and, for the purposes of this survey, to ignore the trivial many.
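Survey tools usually enforce selection limits for you. As an illustration, here is a hypothetical validation step in Python that applies the 3 to 5 task limit described above, ignores duplicate clicks, and rejects tasks that aren’t on the list.

```python
from typing import Iterable, List, Set

MIN_PICKS, MAX_PICKS = 3, 5  # the 3-5 task limit described above


def validate_selection(selected: Iterable[str], task_list: Set[str]) -> List[str]:
    """Return the participant's picks in order, or raise if they break the rules."""
    picks = list(dict.fromkeys(selected))  # drop duplicate clicks, keep first-seen order
    unknown = [t for t in picks if t not in task_list]
    if unknown:
        raise ValueError(f"not on the task list: {unknown}")
    if not MIN_PICKS <= len(picks) <= MAX_PICKS:
        raise ValueError(f"select {MIN_PICKS} to {MAX_PICKS} tasks, got {len(picks)}")
    return picks


task_list = {"Create a 1099", "Pay a vendor bill", "Run a payroll report", "Reconcile an account"}
print(validate_selection(
    ["Pay a vendor bill", "Create a 1099", "Pay a vendor bill", "Run a payroll report"], task_list))
# → ['Pay a vendor bill', 'Create a 1099', 'Run a payroll report']
```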

3. Evaluate Customers’ Satisfaction with Those Top Tasks

Once customers have selected what’s important to them, have the survey software “pipe in” the tasks they selected on subsequent pages. This again keeps customers focused on only what’s most important and reduces the effort required of participants.

Have the customers rate how satisfied they are with the product’s or website’s ability to deliver on the top tasks they selected. You can ask a generic satisfaction question, a more specific usability question, or both. Again, keep it short to prevent dropout. A more generic measure like satisfaction captures issues such as latency (slow-loading pages) and quality (broken links, reports that don’t work, etc.) as well as usability, but this higher-level rating makes it harder to pinpoint what affects satisfaction. Ask participants to briefly describe why they rated a task low.

While these surveys aren’t intended to diagnose problems, they can be an easy way to collect low-hanging fruit; if customers’ expectations are not being met, you want to know. Once the data is collected, identify the top tasks and their average satisfaction ratings. You will typically see a classic Pareto distribution, in which a few tasks account for the majority of customers’ votes.
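The tally described above (votes per task, the cumulative share of votes that reveals the Pareto pattern, and average satisfaction per task) can be sketched in a few lines of Python. The response format (each participant’s selected tasks mapped to the rating they gave), the 1 to 7 scale, and the three sample responses are made-up assumptions for illustration only.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# One response per participant: each selected task mapped to the
# satisfaction rating that participant gave it.
Response = Dict[str, int]


def summarize_top_tasks(responses: List[Response]) -> List[Tuple[str, int, float, float]]:
    """Return (task, votes, cumulative % of all votes, mean satisfaction), most-voted first."""
    votes: Dict[str, int] = defaultdict(int)
    ratings: Dict[str, List[int]] = defaultdict(list)
    for response in responses:
        for task, rating in response.items():
            votes[task] += 1
            ratings[task].append(rating)

    total_votes = sum(votes.values())
    rows = []
    running = 0
    # Most votes first; ties broken alphabetically so the output is stable.
    for task in sorted(votes, key=lambda t: (-votes[t], t)):
        running += votes[task]
        mean_sat = sum(ratings[task]) / len(ratings[task])
        rows.append((task, votes[task], 100.0 * running / total_votes, mean_sat))
    return rows


responses = [
    {"Pay a bill": 6, "Run a report": 3, "Create a 1099": 5},
    {"Pay a bill": 7, "Run a report": 4, "Add a vendor": 6},
    {"Pay a bill": 5, "Run a report": 2, "Create a 1099": 6},
]
for task, n, cum_pct, sat in summarize_top_tasks(responses):
    print(f"{task:<15} votes={n}  cumulative={cum_pct:5.1f}%  mean satisfaction={sat:.2f}")
```

With real data, the point where the cumulative percentage levels off marks the boundary between the critical few tasks and the trivial many.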

4. Analyze the Key Drivers

Using the key customer attitude metrics from step 1 (in our example, overall satisfaction, loyalty, and usability), identify the areas with the biggest impact on attitudes. Participants’ open-ended comments often identify the main causes of their pain and are an easy place to start.

Open-ended comments only get you so far, because customers don’t or can’t always articulate subtle yet important differences in how they feel. A key-driver analysis can detect small differences in importance between variables. This technique uses multiple regression to determine which tasks, statistically, have the most influence on customers’ satisfaction, their likelihood to recommend, or their perception of your product’s usability. The analysis may show that some areas of low satisfaction aren’t the main reason customers aren’t recommending or aren’t satisfied with the product.
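To show the mechanics, here is a minimal pure-Python sketch of one common form of key-driver analysis: regress an outcome (for example, likelihood to recommend) on task satisfaction ratings after standardizing every variable, then rank tasks by the absolute size of their standardized coefficients. It assumes every respondent rated every task, which won’t hold when participants rate only the tasks they selected; in practice you would need to handle missing ratings and would likely use a statistics package. Other approaches, such as relative-weights analysis, cope better with correlated predictors. All names and the sample data are hypothetical.

```python
from statistics import mean, pstdev
from typing import Dict, List


def _standardize(values: List[float]) -> List[float]:
    """Convert raw scores to z-scores (mean 0, standard deviation 1)."""
    m, s = mean(values), pstdev(values)
    if s == 0:
        raise ValueError("a variable has no variance")
    return [(v - m) / s for v in values]


def _solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("predictors are (nearly) collinear")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def key_drivers(task_sat: Dict[str, List[float]], outcome: List[float]) -> Dict[str, float]:
    """Standardized regression weights of each task's satisfaction, largest impact first."""
    if any(len(v) != len(outcome) for v in task_sat.values()):
        raise ValueError("each task needs exactly one rating per respondent")
    names = list(task_sat)
    x = [_standardize(task_sat[name]) for name in names]  # one column per task
    y = _standardize(outcome)
    k, n = len(names), len(y)
    # Normal equations (X^T X) beta = X^T y. No intercept term is needed
    # because every standardized variable has mean zero.
    xtx = [[sum(x[i][r] * x[j][r] for r in range(n)) for j in range(k)] for i in range(k)]
    xty = [sum(x[i][r] * y[r] for r in range(n)) for i in range(k)]
    betas = _solve(xtx, xty)
    return dict(sorted(zip(names, betas), key=lambda kv: -abs(kv[1])))


# Six made-up respondents; likelihood to recommend is built as an exact
# combination of the two task ratings just to make the ranking easy to see.
task_sat = {"Pay a bill": [2, 1, 4, 3, 6, 5], "Run a report": [1, 2, 3, 4, 5, 6]}
likelihood_to_recommend = [1.5, 2.25, 4.0, 4.75, 6.5, 7.25]
for task, beta in key_drivers(task_sat, likelihood_to_recommend).items():
    print(f"{task:<15} standardized beta={beta:.2f}")
```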

5. Create a Scorecard

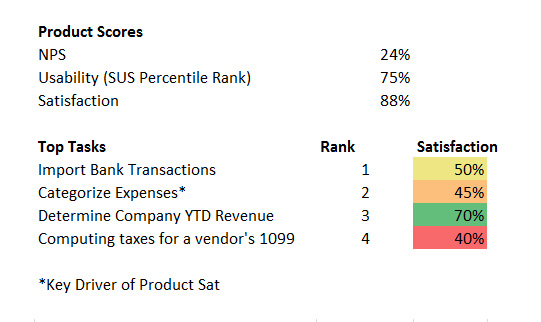

With the top tasks identified and their satisfaction ratings averaged, list the tasks in order of importance, label each task’s satisfaction using any meaningful system (a grade, a color, or a rank), and put the list where stakeholders will see it. Stakeholders can now see that customer satisfaction is “in the red” or “earning a C-.” Figure 1 shows an example of a hypothetical product scorecard with this information. Flag the tasks that were identified as key drivers. Use this scorecard to plan and prioritize, and as a baseline against which to gauge future changes.

Figure 1: An example hypothetical scorecard, including the overall product metrics, top tasks, and satisfaction by tasks with key drivers identified.
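A scorecard like the one in Figure 1 can be generated from the summarized data. This sketch maps each task’s mean satisfaction to a letter grade and color and flags key drivers. The cutoffs, the 1 to 7 scale, and the sample rows are hypothetical placeholders that you would calibrate for your own product and stakeholders.

```python
from typing import List, Set, Tuple

# Hypothetical cutoffs for mean satisfaction on a 1-7 scale, highest first.
GRADE_CUTOFFS: List[Tuple[float, str, str]] = [
    (6.0, "A", "green"),
    (5.5, "B", "green"),
    (5.0, "C", "yellow"),
    (4.5, "D", "red"),
]


def grade(mean_satisfaction: float) -> Tuple[str, str]:
    """Map a task's mean satisfaction to a (letter grade, color) pair."""
    for cutoff, letter, color in GRADE_CUTOFFS:
        if mean_satisfaction >= cutoff:
            return letter, color
    return "F", "red"  # below every cutoff


def scorecard(rows: List[Tuple[str, int, float]], drivers: Set[str]) -> List[str]:
    """Format (task, votes, mean satisfaction) rows, already in order of importance."""
    lines = []
    for task, votes, sat in rows:
        letter, color = grade(sat)
        flag = "  <- key driver" if task in drivers else ""
        lines.append(f"{task:<15} votes={votes:<3} satisfaction={sat:.1f}  {letter} ({color}){flag}")
    return lines


rows = [("Pay a bill", 30, 6.1), ("Run a report", 25, 4.2), ("Create a 1099", 12, 5.3)]
for line in scorecard(rows, drivers={"Run a report"}):
    print(line)
```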

Conclusion

Using this framework, you can quickly see, at a high level, the following:

- Customers’ overall product satisfaction

- Which tasks are most important to customers

- What areas have the highest and lowest satisfaction

- The key drivers of overall satisfaction

- Where to focus your efforts

This is a starting point. Use the high-level scorecard to focus initiatives such as usability tests, customer interviews, or further development to fix problems or add features that customers deem essential.