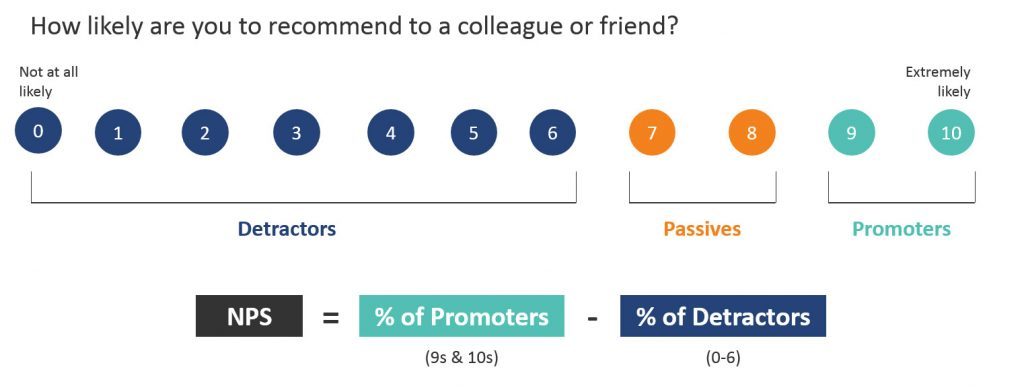

The Net Promoter Score introduced a new language of loyalty.

At center stage are the promoters and detractors.

These designations are given to respondents who answer the How Likely Are You to Recommend (LTR) question as shown below.
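
The cut-offs used throughout this article (0 to 6 for detractors, 7 and 8 for passives, 9 and 10 for promoters) can be written as a small lookup. This is only a minimal sketch: the function names and the example scores are hypothetical and are not taken from the study data.

```python
def nps_category(ltr_score: int) -> str:
    """Map a 0-10 Likelihood-to-Recommend score to its NPS designation."""
    if not 0 <= ltr_score <= 10:
        raise ValueError("LTR scores must be between 0 and 10")
    if ltr_score <= 6:
        return "detractor"
    if ltr_score <= 8:
        return "passive"
    return "promoter"


def promoter_share(recommender_scores: list[int]) -> float:
    """Fraction of recommenders whose LTR score makes them promoters."""
    promoters = sum(1 for s in recommender_scores if nps_category(s) == "promoter")
    return promoters / len(recommender_scores)


# Hypothetical LTR scores from six people who reported recommending.
example_scores = [10, 10, 9, 8, 7, 5]
print(promoter_share(example_scores))  # 3 of the 6 recommenders are promoters
```

The "percent of recommendations from promoters" figures reported later are this kind of share, computed only over respondents who reported recommending.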

But what is the justification for the designations?

Were they just arbitrarily created?

Do they just sound good for executives? How much faith should we put in them?

What Makes Someone a Detractor?

In The Ultimate Question, Reichheld reports that in analyzing several thousand comments, 80% of the negative word-of-mouth comments came from those who responded from 0 to 6 on the Likelihood-to-Recommend item (pg. 30).

In our independent analysis, we were able to corroborate this finding. We found 90% of negative comments came from those who gave 0 to 6 on the 11-point scale (detractors).

Therefore, we found good evidence to support the idea that respondents who answer 0 to 6 are more likely to say bad things about a company and account for the vast majority of negative comments.

What Makes Someone a Promoter?

Reichheld further claims that 80%+ of customer referrals come from promoters. This sort of data can be collected from internal company records, for example, by asking customers how they heard about the company and associating the answer with the purchase.

It would be good to corroborate this finding, but we aren’t privy to internal company records. We can look at a similar measure, the reported recommendation rate, across many companies. For example, in our earlier analysis, we examined two datasets: consumer software and a mix of most recently recommended products. We found:

Consumer software: 64% of consumer software customers who recommended a product were promoters.

Most recent recommendation: 69% of consumers who reported on their most recent recommended product or service were promoters.

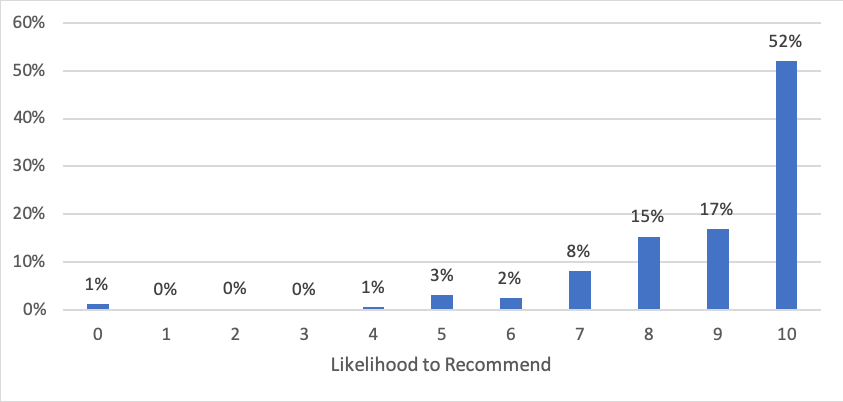

Digging into the second data set, Figure 1 shows the distribution of the responses on the 11-point LTR question for the 2,672 people who recalled their most recently recommended company.

Figure 1: Distribution of likelihood to recommend from 2,672 US respondents who recently recommended a company or product/service.

Across these two datasets, we do find corroborating evidence that the promoters are most likely to recommend. Taking an average of the two datasets reveals that around 67% of recommenders are promoters. This is lower than the 80% that Reichheld cited but still a substantial number. To get to 80%+ of recommendations we’d need to include the 8s (84%).

The very high percentage of 10s who recommended (52% in Figure 1) is more evidence that extreme attitude responses may be a better predictor of behavior.

While we did find some corroboration of Reichheld’s data, our approach has two methodological drawbacks. First, we collected both past recommendations and future intent to recommend at a single point in time. Respondents who currently rate their likelihood to recommend highly may also overstate their past recommendations, thus inflating these percentages. Second, we used the recommend rate, which is a bit different from the actual referral rate. A referral would be an actual purchase made by someone who was recommended or referred to the company. Just because you recommend a product to a friend doesn’t mean they will follow through and make a purchase, and that may account for some of the difference.

One way to account for the problem with potential bias from using one timepoint to collect past and future recommend intentions is to conduct a longitudinal study. This will allow us to find out what percent of people who say they will recommend actually do.

Longitudinal Study of Promoter Recommendations

In November 2018 we asked 6,026 participants from an online US panel to rate their likelihood to recommend several common brands, their most recent purchase (n = 4,686), and their most recently recommended company/product (n = 2,763) using the 11-point Likelihood to Recommend item. The common brands shown to participants were:

- Amazon

- Best Buy

- Apple

- eBay

- Target

- Walmart

- Southwest Airlines

- Budget Rent a Car

- Enterprise Rent-a-Car

We rotated the brands so not all participants saw each one. This gave us between 502 and 1,027 likelihood-to-recommend scores per brand.

Examples of companies people mentioned purchasing from or recommending include:

- Sportsman’s Warehouse

- WinCo Foods

- Shoes.com

- Whit’s Frozen Custard

- Kroger

- Steam

Follow-up 30 to 90 Days Later

We then invited all respondents to participate in a follow-up survey until we collected 1,160 responses: 560 at 30 days, 300 at 60 days, and 300 at 90 days, which took place in December 2018, January 2019, and February 2019, respectively.

We presented respondents with a similar list of companies rated in the November survey and asked them to select which companies, if any, they had recommended to others in the prior 30, 60, or 90 days (based on the follow-up period).

The list presented also included the company, product, or service each respondent had reported purchasing or recommending in the original survey (entered in an open-text field and cleaned up grammatically), shown in randomized order. If a respondent mentioned one of the companies on our brand list, we presented an alternate brand to avoid listing their stated company twice.

Participants were therefore asked how likely they are to recommend companies and brands they may or may not have done business with (e.g., Southwest, Budget, or eBay) and by definition, companies they have a relationship with (i.e., most recent purchase and most recent recommendation). The most recent recommendation can be seen as a sort of second recommendation since the original survey.

Recommendations after Recent Purchases and Recommendations

First, we analyzed respondents’ most recent purchase or most recently recommended product. Table 1 shows the number of responses, the number that recommended, the percent that recommended, and the percent of those recommendations that came from promoters at each time interval (and in aggregate).

After 90 days, we had 406 responses for the most recently recommended product (31 responses collected at 30 days, 106 at 60 days, and 269 at 90 days). Of these, 151 (37%) reported recommending the company/product to a friend or colleague at either 30, 60, or 90 days. Also, after 90 days we had 946 responses for the most recently purchased product, of which 471 (50%) reported recommending it to a friend or colleague during the 30, 60, or 90-day interval after our initial survey.

The far right column of Table 1 shows the percent of recommendations that came from promoters. For example, after 30 days, 20 out of 31 people reported recommending their previously recommended company again. Of those 20 recommendations, 17 (85%) came from promoters. At 90 days, 94 out of 269 respondents recommended their most recent company and 77% of those recommendations came from promoters.

| Type | Duration (Days) | # of Responses | # Recommended | % Recommended | % of Recommendations from Promoters |
|---|---|---|---|---|---|
| Recent Rec. | 30 | 31 | 20 | 65% | 85% |
| Recent Rec. | 60 | 106 | 37 | 35% | 73% |
| Recent Rec. | 90 | 269 | 94 | 35% | 77% |
| Recent Rec. | Combined | 406 | 151 | 37% | 77% |
| Recent Purchase | 30 | 534 | 295 | 55% | 60% |
| Recent Purchase | 60 | 252 | 126 | 50% | 57% |
| Recent Purchase | 90 | 160 | 50 | 31% | 66% |
| Recent Purchase | Combined | 946 | 471 | 50% | 60% |

Table 1: Percent of recommendations coming from promoters for the most recently recommended company/product or the most recent purchase at 30, 60, and 90 days.
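
The recommend rates in Table 1 follow directly from its counts, and the combined rows pool the counts across the three follow-up windows rather than averaging the three percentages. The sketch below recomputes them from the table; the variable and function names are illustrative only.

```python
# (type, follow-up window in days, responses, number who recommended), from Table 1.
TABLE_1 = [
    ("Recent Rec.", 30, 31, 20),
    ("Recent Rec.", 60, 106, 37),
    ("Recent Rec.", 90, 269, 94),
    ("Recent Purchase", 30, 534, 295),
    ("Recent Purchase", 60, 252, 126),
    ("Recent Purchase", 90, 160, 50),
]


def percent(part: int, whole: int) -> int:
    """Whole-number percentage, as displayed in the table."""
    return round(100 * part / whole)


def pooled_counts(kind: str) -> tuple[int, int]:
    """Sum responses and recommenders across the three windows for one type."""
    rows = [row for row in TABLE_1 if row[0] == kind]
    return sum(r[2] for r in rows), sum(r[3] for r in rows)


for kind, days, responses, recommended in TABLE_1:
    print(f"{kind:<16} {days:>2} days: {percent(recommended, responses)}%")

for kind in ("Recent Rec.", "Recent Purchase"):
    responses, recommended = pooled_counts(kind)
    print(f"{kind:<16} combined: {recommended}/{responses} = {percent(recommended, responses)}%")
```

Pooling matters here because the windows have very different sample sizes (for example, 31 versus 269 responses for recent recommendations), so the larger windows dominate the combined rate.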

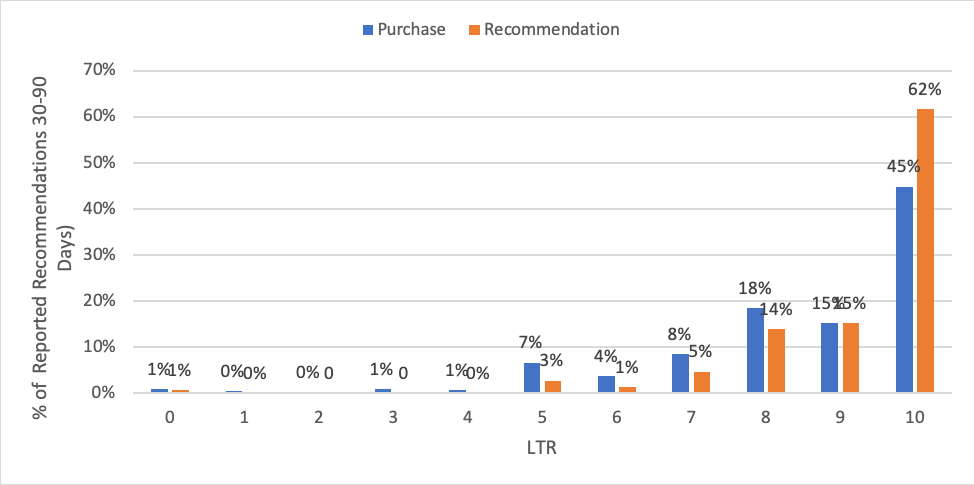

Aggregating across the 90 days, we found that promoters account for 77% of recommendations for the most recently recommended product and 60% for the most recent purchase. This distribution can be seen in Figure 2.

Figure 2: Percent of all reported recommendations at 30, 60, and 90 days for respondents’ most recently purchased and most recently recommended products.

Figure 2 shows that most self-reported recommendations do indeed come from promoters (9s and 10s) although the proportion coming from 8s (passives) is indistinguishable from the percentage coming from 9s (and is nominally higher for recent purchases). Similar to Figure 1, to account for 80%+ of recommendations, we’d need to include 8s.

We also again see 10s accounting for a disproportionate amount of the recommendations, continuing to suggest the extreme responders are a better predictor of behavior.

The percentages of recommendations coming from promoters are similar to, albeit lower than, Reichheld’s reported 80% (especially for the most recent purchase). The difference could arise because we’re using only data from a 30, 60, or 90-day window; a longer time period would likely increase the number of recommendations. Research by Kumar et al. found that the optimum referral time was 90 days for telecom companies and 180 days for financial services.

Interestingly though, in looking at Table 1, a similar but often higher percentage of respondents report recommending at 30 days than at 60 or 90 days. This could be because respondents better remember recommendations over a shorter time period, or it could be a seasonal anomaly: the first 30 days of data collection included Christmas, which may be contributing to the higher short-term rates. We’re also relying on self-reported recommendations, which may not be as accurate as tracking actual referrals, something a future analysis can explore.

Recommendations from Specified Brands

Next, we looked at the recommend rate for the rotating list of brands we specified. A substantial number of respondents reported not having purchased from several of the brands, so asking about recommendations seemed less relevant for them. To identify customer relationships, we sent a new survey to 500 of the 1,160 respondents in February 2019, asking how many times, if at all, they had purchased from each brand on the list in 2018 and how much they estimated they had spent.

We therefore focused our analysis on the subset of respondents who reported having made a purchase from the brand in the last year. This reduced the sample size because we rotated brands at the initial timepoint, when we asked how likely respondents would be to recommend (November 2018), and then asked only a subset at 30, 60, and 90 days whether they had recommended.

Table 2 shows a breakdown of the responses by brand (multiple responses allowed per respondent). For example, 475 people were asked the LTR for eBay in Nov 2018 but only 142 were customers (those who reported making a purchase in the prior year). Of the 142 customers who answered the LTR, 29 (20%) reported recommending the company in the prior period. Of these 29 recommenders, 14 (48%) were promoters.

Table 2 shows that 51% of all recommendations came from promoters. This percentage is lower than the 60% we found with the most recent purchase, lower than the 80% Reichheld referral rate, and lower than the 77% for respondents’ most recently recommended brand.

There is variation across the brands, with a high of 57% of recommendations coming from Amazon promoters and a low of 0% for Budget. The low percentages for Southwest and Budget are likely a consequence of very few overall recommendations for these brands in this sample. For example, Budget had only two customers who recommended, and neither was a promoter. With such small numbers of recommendations, wide swings in percentages are expected by brand, but the aggregate gives a more stable estimate. A future analysis can include a larger number of customers.

| Brand | # of Customers | # of Customers That Recommended | % of All Customers That Recommended | % of Recommendations from Promoters (Customers Only) |
|---|---|---|---|---|
| Amazon | 278 | 132 | 47% | 57% |
| Best Buy | 117 | 17 | 15% | 41% |
| Apple | 75 | 16 | 21% | 50% |
| eBay | 142 | 29 | 20% | 48% |
| Target | 196 | 63 | 32% | 51% |
| Walmart | 234 | 38 | 16% | 53% |
| Southwest | 37 | 16 | 43% | 25% |
| Budget | 8 | 2 | 25% | 0% |
| Enterprise | 15 | 2 | 13% | 50% |
| Aggregated | 1102 | 315 | 28% | 51% |

Table 2: Percent of recommendations from promoters at either 30, 60, or 90 days for selected brands for customers only.
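
To illustrate why brand-level percentages swing so widely with small samples while the aggregate is more stable, the sketch below computes a 95% Wilson score interval for the recommend rate of Budget customers (2 of 8) and of all customers combined (315 of 1,102). The Wilson interval is an assumption introduced here for illustration; the original analysis does not say which interval method, if any, was used.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Counts taken from Table 2: customers who recommended / customers.
budget_low, budget_high = wilson_interval(2, 8)
agg_low, agg_high = wilson_interval(315, 1102)

print(f"Budget:     {budget_low:.0%} to {budget_high:.0%}")
print(f"Aggregated: {agg_low:.0%} to {agg_high:.0%}")
```

With only eight customers, the plausible range for Budget spans more than 50 percentage points, whereas the aggregated estimate is pinned down to within a few points.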

Promoters’ Share of Recommendations

So, are promoters more likely to recommend? The answer is a clear yes. Table 3 shows the percent of recommendations from detractors, passives, and promoters for respondents’ most recently recommended product, most recent purchase, and across common brands. Promoters account for between 2.6 times (51% vs. 19%) and 16 times (77% vs. 5%) as many recommendations as detractors.

| % Recommendations from: | Detractor | Passive | Promoter | 10s | 0-4s |
|---|---|---|---|---|---|
| Recent Recommendation | 5% | 19% | 77% | 62% | 1% |
| Recent Purchase | 13% | 27% | 60% | 45% | 3% |
| Selected Brands | 19% | 30% | 51% | 30% | 7% |

Table 3: Percent of recommendations for detractors, passives, and promoters.
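
The promoter-to-detractor ratios quoted before Table 3 can be approximated from the table’s rounded percentages, as in the sketch below. Because the inputs are rounded, the results (roughly 2.7 and 15.4 at the extremes) differ slightly from the 2.6 and 16 quoted in the text, which may have been computed from unrounded data; that explanation is an assumption.

```python
# Percent of recommendations by NPS group, copied from Table 3 (rounded values).
TABLE_3 = {
    "Recent Recommendation": {"detractor": 5, "passive": 19, "promoter": 77},
    "Recent Purchase": {"detractor": 13, "passive": 27, "promoter": 60},
    "Selected Brands": {"detractor": 19, "passive": 30, "promoter": 51},
}


def promoter_to_detractor_ratio(shares: dict[str, int]) -> float:
    """How many times more recommendations come from promoters than detractors."""
    return shares["promoter"] / shares["detractor"]


ratios = {name: promoter_to_detractor_ratio(s) for name, s in TABLE_3.items()}

for name, ratio in ratios.items():
    print(f"{name}: promoters account for {ratio:.1f}x the detractor share")
```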

5s and 6s Account for Most of the Detractor Recommendations

There is some credence to the idea that people who feel neutral, or even nominally above neutral (5 and 6), may still recommend a company (a point raised by Grisaffe, 2007). We see some of this in our data too (see the 5s and 6s in Figure 2, for example). In fact, most of the recommendations from detractors come from 5s and 6s: 64% for the selected brands, 86% for recent purchases, and 77% for recent recommendations.

Least Likely To Recommend Don’t Recommend

Often the same data, when viewed from another perspective, can be as informative. Another way to look at Table 3 is that very few detractors actually recommended the brand: between 81% and 95% of detractors did not recommend. Taking this further, the least-likely-to-recommend respondents (the 0s to 4s) also really don’t recommend. Table 3 shows that between 93% and 99% of the least likely to recommend actually didn’t recommend. This is quite similar to other published findings, which found that 88% of respondents who said they were unlikely to purchase computer equipment didn’t make a purchase, and 95% of “non-intenders” didn’t recommend a TV show. In other words, if people express a low likelihood of recommending, they probably won’t recommend.

Correlation Between Intentions and Actions

But if you are wondering who’s most likely to recommend, your best bet is certainly promoters, especially 10s, who account for between 30% and 62% of recommendations (see Table 3). After the promoters, a non-trivial number of passives and a few detractors (mostly 5s and 6s) will also recommend.

In general, as LTR scores go up, reported recommendation rates follow as Figures 1 and 2 show. This correlation can be described using the correlation coefficient r, which shows a strong correlation. For the selected brands the correlation between LTR and recommendations is r = .90, for recent purchases r = .79, and for recent recommendations r = .69.

However, the large spike at 10 makes the relationship noticeably non-linear, which reduces the correlation coefficient’s ability to describe it accurately (r most likely underestimates the strength of the relationship). Better modeling this non-linear relationship is the subject of future research.
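
To illustrate why a spike at 10 can pull Pearson’s r down even when recommendations rise steadily with LTR, the sketch below compares r with a rank-based (Spearman) correlation. The shares used are hypothetical values chosen to mimic a spike at 10; they are not the study’s data, and the rank correlation is introduced here only for comparison, not as the method used in the analysis.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def ranks(values: list[float]) -> list[float]:
    """Rank values from 1 to n (assumes no ties, which holds for the data below)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = float(rank)
    return result


def spearman_rho(xs: list[float], ys: list[float]) -> float:
    """Spearman rank correlation: Pearson's r computed on the ranks."""
    return pearson_r(ranks(xs), ranks(ys))


# Hypothetical percent of recommendations at each LTR score, with a spike at 10.
ltr_scores = [float(score) for score in range(11)]
hypothetical_shares = [0.2, 0.4, 0.6, 0.8, 1.0, 2.0, 3.0, 7.0, 13.0, 15.0, 57.0]

r = pearson_r(ltr_scores, hypothetical_shares)
rho = spearman_rho(ltr_scores, hypothetical_shares)
print(f"Pearson r = {r:.2f}, Spearman rho = {rho:.2f}")
```

In this made-up example the shares increase at every step, so the rank correlation is perfect, while Pearson’s r is noticeably lower because a straight line fits the spike poorly.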

Summary & Takeaways

Using both a single-point survey and a longitudinal study, we compared likelihood-to-recommend scores on several companies to self-reported recommendations at 30, 60, and 90 days. We found:

Between 28% and 50% of people report recommending. The percent of respondents who reported recommending the company or product ranged from a low of 28% across common brands to 37% for the most recently recommended product and a high of 50% for the most recent purchase. The 29% recommend rate for consumer software customers also falls within this range. The rate dipped lower when looking at specific brands with smaller sample sizes (e.g., Enterprise, Walmart, and Best Buy).

Customers who respond 10 tend to recommend. We saw in both datasets we analyzed that respondents who answered 10, the “top-box” score for likelihood to recommend, accounted for by far the largest portion of self-reported recommendations. There was a much larger difference between the 10s and 9s than between the 9s and 8s, lending credence to the idea that the extreme responders may be a better indicator of behavior (a non-linear relationship).

Most recommendations come from promoters. While at most around half of customers recommend, most recommendations do come from promoters. While passives and detractors still report recommending a company, promoters are between 2 and 16 times more likely to recommend than detractors. The higher a person scores on the 11-point LTR scale, the more likely they are to recommend, with the biggest (non-linear) jump happening between 9s and 10s.

Between 51% and 77% of recommendations come from promoters. Reichheld reported that promoters accounted for 80% of company referrals. Using a similar measure, we found promoters accounted for a smaller, albeit still substantial, share of self-reported recommendations, depending on how the data is analyzed.

Detractors don’t recommend. Respondents who indicated they were the least likely to recommend indeed didn’t recommend. Between 81% and 95% of detractors did not recommend and between 93% and 99% of 0 to 4 responses also didn’t report recommending the brand. This asymmetric relationship suggests that if people say they will recommend, they might; if people say they won’t recommend, they almost certainly won’t.

To get 80% of recommendations, you need to include the passives. In each way we examined the percentage of recommendations, promoters, while accounting for most, fell short of the 80% threshold (fluctuating between 51% and 77% depending on what was analyzed). Should you want to increase the chance you are accounting for 80% of future recommendations, include the passive responders (7s and 8s).

This time period was limited but a lot happens in 30 days. One limitation of our analysis was that we had only a 90-day look-back window for participants to have recommended. For some products and services, this may be an insufficient amount of time to allow for recommending. Interestingly though, the 30-day recommend rate was similar to the 60- and 90-day rates, which also matched the software recommendation rate of 29%. This could be an anomaly of our seasonal data collection period (over Christmas). A future analysis can examine a longer time period.

This study was limited to self-reported recommendations. In our analyses, we relied on respondents’ abilities to accurately self-report recommending to friends and colleagues. A future analysis can help corroborate our findings by linking actual recommendations (and ultimately referrals) to likelihood-to-recommend rates.

Purchasing and recommending have momentum. One reason we could see more recommendations from promoters is that participants who recommended in the past are likely to continue purchasing and recommending (something we discussed in our NPS study). Our data collection method may be biased toward more favorable attitudes, and this in turn may inflate our numbers (which may explain why promoters account for a higher share of the recommendations for recent purchases and recommendations).

Thanks to Lawton Pybus, PhD, for assisting with the data collection.