When we have a good experience with a service or product, we enjoy it, tell our friends, and will probably use that service or product again.

But when we have a frustrating or poor experience, such as message boxes relentlessly popping up during a sporting event, we hate the product, tell our friends about it, post about it on Twitter, and probably won’t use the service or product again (unless we have little choice).

Understanding a poor experience and eliminating its root causes can be an effective strategy for improving the overall experience.

We can take familiar usability metrics and frame them to describe poor experiences.

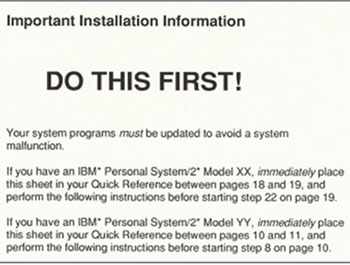

Task Failure: Task completion is the fundamental usability metric. If users cannot complete a task, not much else matters. When we report completion rates, stakeholders usually focus on the tasks with high failure rates rather than those with high success rates. There’s good reason: You want to know what the problems are and how to fix them. In our book, Jim Lewis recounted how 6 out of 8 people failed to read printed instructions placed on top of the packaging material in giant type that said “DO THIS FIRST.” The high failure rate convinced the product team that they needed to fix the product before shipping.

Errors: The slips and mistakes users make provide valuable insight into problems in an interface. Those problems can lead to task failure, longer task times, and an overall clumsy experience. When we watch users with mobile applications, we see a lot of fat-finger typos, overzealous autocorrects, and auto-capitalization errors. Counting and describing errors will help prevent them. You can’t completely eliminate things like typos, of course, but you can eliminate the steps and unnecessary form fields that cause them.

Usability Problems: If you collect only one thing in a usability test, it should be a list of problems users had or will have with an interface. The usability problem is often the cause of errors, longer task times, and failed tasks. There’s usually something in the interface, or a misunderstanding of the tasks or user goals, that leads to usability problems. We also recommend analyzing and reporting problems in a matrix to estimate how common certain problems are and to estimate the percent of problems uncovered.
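As a rough illustration of the matrix idea, the sketch below stores one row per problem and one column per user, then computes how often each problem occurred. The problem names and data are made up. The discovery estimate uses the common 1 - (1 - p)^n formula with the average problem frequency as p; that formula is an assumption brought in for the example, and it tends to be optimistic with small samples.

```python
# Hypothetical data: each row is a problem, each column a user
# (1 = that user encountered the problem, 0 = did not).
problem_matrix = {
    "Missed the search filter": [1, 1, 0, 1, 0],
    "Confusing error message": [1, 0, 0, 0, 0],
    "Could not find checkout": [0, 1, 1, 1, 1],
}


def problem_frequencies(matrix):
    """Proportion of users who encountered each problem."""
    return {name: sum(hits) / len(hits) for name, hits in matrix.items()}


def estimated_discovery(matrix):
    """Naive estimate of the share of problems uncovered: 1 - (1 - p)^n.

    p is the average problem frequency and n is the number of users.
    This formula is an assumption for the sketch, not a claim about
    how any particular team computes the figure.
    """
    freqs = problem_frequencies(matrix)
    n_users = len(next(iter(matrix.values())))
    p = sum(freqs.values()) / len(freqs)
    return 1 - (1 - p) ** n_users


freqs = problem_frequencies(problem_matrix)
discovered = estimated_discovery(problem_matrix)

for name, freq in sorted(freqs.items(), key=lambda item: -item[1]):
    print(f"{name}: {freq:.0%} of users")
print(f"Estimated problems uncovered: {discovered:.0%}")
```

Sorting by frequency puts the most common problems at the top of the report, which is usually where a product team wants to start.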

Long Task Times: When you want to get users to register or sign up for a service, even a free one, every second counts. The longer it takes to fill out a form, the fewer users you will convert to customers. Recording time on task and examining the reasons for long task times can be revealing. Even with unmoderated usability tests, recording a few users can help diagnose interaction problems. Services like Usertesting.com and now MUIQ offer the ability to see what users are doing even if they aren’t in the lab with you.

Disasters: The combination of task failure and high confidence is a disaster. On an automotive website, if a user finds the wrong value for their car but thinks it’s correct, this is a disaster. It can lead to a real loss of money when selling or trading in and a loss of credibility for the brand that provided the information. Even if the information was correct on the website, does it matter if users find the wrong information and they don’t know it is wrong? Disasters are easy to derive because most usability tests already collect task completion, so all you need to do is include the confidence question.
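A minimal sketch of deriving disasters from data most tests already collect. It assumes confidence is rated on a 1 to 7 scale and treats 6 or higher as "high confidence"; both the scale and the cutoff are assumptions for illustration, as are the `TaskResult` type and the sample data.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    user: str
    completed: bool
    confidence: int  # assumed 1-7 scale, 7 = extremely confident


def disaster_rate(results, high_confidence=6):
    """Share of attempts that failed while the user was highly confident.

    The high_confidence cutoff is a hypothetical choice; adjust it to
    match the scale used in your own study.
    """
    if not results:
        return 0.0
    disasters = [
        r for r in results
        if not r.completed and r.confidence >= high_confidence
    ]
    return len(disasters) / len(results)


# Hypothetical results for one task.
results = [
    TaskResult("u1", True, 7),
    TaskResult("u2", False, 7),   # failed but confident: a disaster
    TaskResult("u3", False, 3),   # failed and knew it
    TaskResult("u4", True, 5),
    TaskResult("u5", False, 6),   # failed but confident: a disaster
]

rate = disaster_rate(results)
print(f"Disaster rate: {rate:.0%}")
```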

Task Difficulty: We ask the Single Ease Question after each task in a usability test. It’s a 7-point scale, and if a user provides a rating that is lower than a 5, we ask them to describe why they found the task difficult. Summarizing these reasons into discrete buckets provides insights on what to fix.
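To show how those follow-up reasons might be summarized, here is a small sketch that keeps only ratings below 5 and counts the coded reasons. The bucket labels and responses are invented, and it assumes someone has already coded each open-ended comment into a short label.

```python
from collections import Counter

FOLLOW_UP_BELOW = 5  # ratings lower than 5 trigger the "why?" follow-up

# Hypothetical (SEQ rating, coded reason) pairs for one task.
# The reason is None when no follow-up was asked.
seq_responses = [
    (6, None),
    (3, "navigation"),
    (4, "terminology"),
    (2, "navigation"),
    (7, None),
    (5, None),
]


def difficulty_buckets(responses, threshold=FOLLOW_UP_BELOW):
    """Count coded reasons among users who rated the task as difficult."""
    return Counter(
        reason
        for rating, reason in responses
        if rating < threshold and reason
    )


buckets = difficulty_buckets(seq_responses)
for reason, count in buckets.most_common():
    print(f"{reason}: {count}")
```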

Detractor Causes: The “likelihood to recommend” question is one of the most popular questions for measuring customer loyalty and generates the ubiquitous Net Promoter Score. We ask the users least likely to recommend, the so-called detractors (responses of 0-6), to briefly explain why they aren’t likely to recommend. Like the SEQ reasons in task difficulty, these responses help us understand what’s causing the poor experience, what to fix, and hopefully how to make the experience better for all of us.
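For reference, a short sketch of the arithmetic behind the score and of pulling out detractor comments. It uses the standard definition: promoters answer 9 or 10, detractors answer 0 to 6, and the score is the percentage of promoters minus the percentage of detractors. The responses are made up.

```python
def net_promoter_score(ratings):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def detractor_comments(responses):
    """Collect the explanations given by detractors (ratings of 0-6)."""
    return [comment for rating, comment in responses if rating <= 6 and comment]


# Hypothetical (rating, comment) pairs from a survey.
responses = [
    (10, ""),
    (9, ""),
    (8, ""),
    (6, "Too many pop-ups"),
    (3, "Checkout kept failing"),
    (9, ""),
    (10, ""),
    (0, "Could not find pricing"),
]

nps = net_promoter_score([rating for rating, _ in responses])
print(f"NPS: {nps:+.1f}")
for comment in detractor_comments(responses):
    print(f"- {comment}")
```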